Why does adding a multiple of an equation to another equation in linear algebra result in row equivalence?

Why is number 3 true? In theory, why does this work? Why are these systems equivalent?

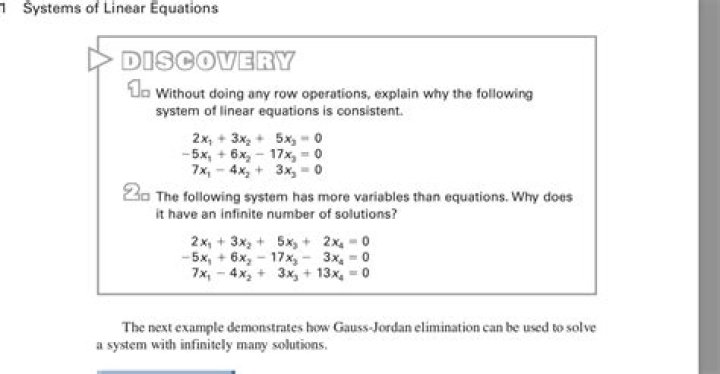

I also don't understand what the above is trying to get at. How do you figure out 1?


When you have an equation you essentially have the statement $a = b$. When two equations are involved, you also have $c = d$.

Now let's assume both these statements are true. Then we can start with one:

$$a = b$$

Add $rc$ to both sides, for an arbitrary real $r$ (the equality clearly still holds):

$$a + rc = b + rc$$

And finally use $c = d$ to replace the $c$ on the right hand side:

$$a + rc = b + rd$$

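That is, in a system of equations, you can add an arbitrary multiple of one equation to another and still have an equivalent system. The step can also be undone: adding $-r$ times the same equation recovers the original one. "Equivalent" here means that the two systems have exactly the same solutions: a set of values satisfies one system if and only if it satisfies the other.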
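To see this concretely, here is a minimal Python sketch. The two equations, the multiplier `r`, and the helper names `add_multiple` and `satisfies` are made up for illustration and do not come from the question. It replaces one equation by itself plus `r` times the other, compares the solutions of both systems on a small grid of candidate points, and then undoes the step.

```python
from fractions import Fraction
from itertools import product

# Each equation a*x + b*y = c is stored as the tuple (a, b, c).
# These two equations are illustrative assumptions, not taken from the question.
eq1 = (Fraction(1), Fraction(2), Fraction(5))   # x + 2y = 5
eq2 = (Fraction(3), Fraction(-1), Fraction(1))  # 3x - y = 1

def add_multiple(target, source, r):
    """Return target + r * source, combined coefficient by coefficient."""
    return tuple(t + r * s for t, s in zip(target, source))

def satisfies(eq, x, y):
    """Check whether the point (x, y) satisfies a*x + b*y = c."""
    a, b, c = eq
    return a * x + b * y == c

r = Fraction(-3)
new_eq1 = add_multiple(eq1, eq2, r)  # replaces eq1; eq2 is kept unchanged

# Compare the solutions of both systems on a small grid of candidate points.
grid = [Fraction(n, 2) for n in range(-10, 11)]
original = {(x, y) for x, y in product(grid, grid)
            if satisfies(eq1, x, y) and satisfies(eq2, x, y)}
modified = {(x, y) for x, y in product(grid, grid)
            if satisfies(new_eq1, x, y) and satisfies(eq2, x, y)}

# Undoing the step (adding -r times eq2) recovers the original equation.
restored = add_multiple(new_eq1, eq2, -r)

print(original == modified, restored == eq1)
```

The grid check is only a finite spot check, not a proof. The proof is the algebra above, together with the fact that the step is reversible.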

For example, if we have the equations $3y+2x=6$ and $5y-2x=10$, we can find $x$ and $y$ easily: adding the two equations eliminates $x$ and gives $8y=16$, so $y=2$, and substituting back gives $x=0$.

But for equations like $3x+y=9$ and $5x+4y=22$, simply adding or subtracting the two equations does not eliminate a variable. We first need to multiply one equation by a suitable constant so that the coefficients of $x$ (or of $y$) match, and only then add or subtract.
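As a worked version of this second example (one possible choice of multiplier; eliminating $x$ instead would work equally well), multiply the first equation by $4$ and subtract the second from it:

$$ \begin{aligned} 4(3x + y) - (5x + 4y) &= 4 \cdot 9 - 22 \\ 7x &= 14 \\ x &= 2 \end{aligned} $$

Substituting $x = 2$ into $3x + y = 9$ gives $y = 3$, and this pair also satisfies the second equation: $5 \cdot 2 + 4 \cdot 3 = 22$.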

Note that every homogeneous system of linear equations is consistent, because setting every variable to zero always gives a solution. If a homogeneous system has fewer equations than variables, it has infinitely many solutions.
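A small illustration of that last point, using a system chosen here only as an example: two homogeneous equations in three unknowns,

$$ \begin{aligned} x + 2y - z &= 0 \\ y + z &= 0 \end{aligned} $$

Taking $z = t$ as a free parameter, the second equation gives $y = -t$ and the first then gives $x = 3t$. Every real $t$ yields a solution $(3t, -t, t)$, so there are infinitely many, and $t = 0$ is the zero solution.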

Since you said "in theory"... :)

For a more abstract viewpoint (which may be helpful once you get further in your linear algebra studies), note that a system of linear equations

$$ \begin{aligned} a_{11} x_1 + a_{12} x_2 + \cdots + a_{1m} x_m &= b_1 \\ a_{21} x_1 + a_{22} x_2 + \cdots + a_{2m} x_m &= b_2 \\ &\;\;\vdots \\ a_{n1} x_1 + a_{n2} x_2 + \cdots + a_{nm} x_m &= b_n \end{aligned} $$

can be rewritten as a matrix equation

$$ A \vec{x} = \vec{b}, $$ and that each elementary row operation is equivalent to multiplying both sides of this equation on the left by an elementary matrix $E$, i.e. changing $A \vec{x} = \vec{b}$ to the modified system $$ E A \vec{x} = E \vec{b}. $$

From this point it is easy to see that if $\vec{x}$ is a solution to the original system, then it is also a solution to the modified system.

Now you may be asking why we care about solutions to the modified system --- we were trying to solve $A \vec{x} = \vec{b}$, not $E A \vec{x} = E \vec{b}$. Well, it is a basic exercise in linear algebra to show that each type of elementary matrix is invertible (in fact they generate all invertible matrices!), so that if $\vec{x}$ solves the modified system, we can "undo" the elementary matrices and see that $\vec{x}$ solves the original system as well:

$$ E A \vec{x} = E\vec{b} \iff E^{-1} E A \vec{x} = E^{-1} E \vec{b} \iff A \vec{x} = \vec{b}. $$

In short, multiplication by elementary (or invertible) matrices preserves the solution set of the matrix equation. This is the more abstract / theoretical viewpoint of why Gaussian elimination works.
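Here is a minimal Python sketch of the same idea, using the system $3x+y=9$, $5x+4y=22$ from another answer. The helper names `matmul` and `elementary_add` are made up for this illustration, and only the "add a multiple of one row to another" type of elementary matrix is shown.

```python
from fractions import Fraction

def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*Q)]
            for row in P]

def elementary_add(n, i, j, r):
    """n x n identity with r placed at (i, j), i != j: adds r * row j to row i."""
    E = [[Fraction(int(a == b)) for b in range(n)] for a in range(n)]
    E[i][j] += Fraction(r)
    return E

# System 3x + y = 9, 5x + 4y = 22, written as A x = b.
A = [[Fraction(3), Fraction(1)], [Fraction(5), Fraction(4)]]
b = [[Fraction(9)], [Fraction(22)]]
x = [[Fraction(2)], [Fraction(3)]]   # the solution x = 2, y = 3

E = elementary_add(2, 1, 0, -4)      # second row <- second row - 4 * first row
E_inv = elementary_add(2, 1, 0, 4)   # the inverse adds the multiple back

EA, Eb = matmul(E, A), matmul(E, b)  # the modified system (EA) x = Eb

print(matmul(A, x) == b)             # x solves the original system
print(matmul(EA, x) == Eb)           # x also solves the modified system
print(matmul(E_inv, EA) == A)        # multiplying by E^{-1} undoes the row operation
```

Because `E_inv` exists, no solutions are gained or lost when going from $A \vec{x} = \vec{b}$ to $E A \vec{x} = E \vec{b}$, which is exactly the "if and only if" chain above.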
