What makes a normal distribution asymptotic?

I'm studying Timothy C. Urdan's *Statistics in Plain English* and want to verify my understanding of his definition of a normal distribution. Per volume three:

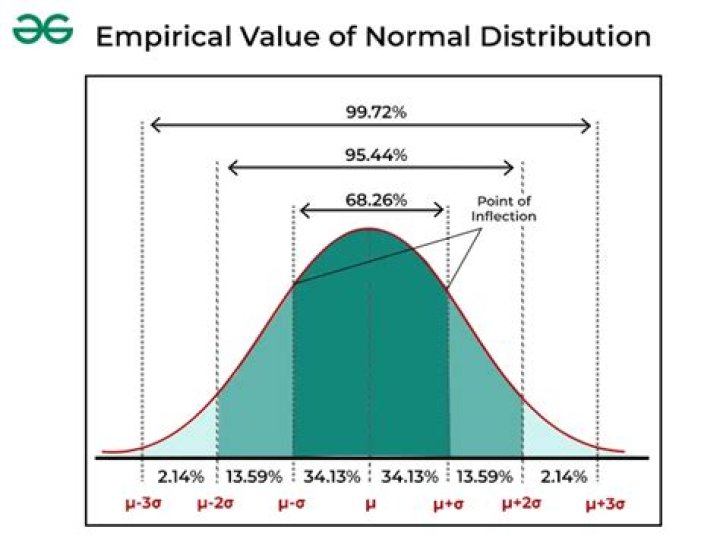

"Normal distribution: A bell-shaped frequency distribution of scores that has the mean, median and mode in the middle of the distribution and is symmetrical and is asymptotic."

By asymptotic, I assume he means that a normal distribution's tails approach 0 but never actually reach it. Is my assumption correct? Thank you.


An asymptotic distribution is often defined to be a probability distribution that is the limiting distribution of a sequence of distributions. More precisely, consider a sequence of random variables $(X_n)_{n\in \mathbb{N}}$ with associated cumulative distribution functions (CDFs) $F_n$. This sequence is said to converge in distribution to a random variable $X$ if\begin{equation*} \lim_{n\to\infty} F_n(x) = F(x) \end{equation*}for every $x$ at which $F$ is continuous, where $F$ is the CDF of $X$.
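To make the definition concrete, here is a minimal Python sketch. The example is an illustration of my own, not something from the book, chosen only because both CDFs have closed forms: if $U_1, \dots, U_n$ are i.i.d. Uniform(0, 1) and $M_n = \max_i U_i$, then $Y_n = n(1 - M_n)$ has CDF $F_n(x) = 1 - (1 - x/n)^n$ for $0 \le x \le n$, which converges to the Exponential(1) CDF $F(x) = 1 - e^{-x}$. The function names are hypothetical. Evaluating $|F_n(x) - F(x)|$ at a fixed $x$ for growing $n$ shows the gap shrinking, which is what convergence in distribution asks for.

```python
import math


def cdf_n(x: float, n: int) -> float:
    """Exact CDF of Y_n = n * (1 - max(U_1, ..., U_n)) for i.i.d. U_i ~ Uniform(0, 1)."""
    if x <= 0:
        return 0.0
    if x >= n:
        return 1.0
    return 1.0 - (1.0 - x / n) ** n


def cdf_limit(x: float) -> float:
    """CDF of the limiting Exponential(1) distribution."""
    return 0.0 if x <= 0 else 1.0 - math.exp(-x)


def cdf_gaps(x: float, ns: list[int]) -> list[float]:
    """|F_n(x) - F(x)| at a fixed point x, for each sample size n in ns."""
    return [abs(cdf_n(x, n) - cdf_limit(x)) for n in ns]


if __name__ == "__main__":
    sizes = [10, 100, 1000, 10000]
    for x in (0.5, 1.0, 2.0):
        # The gap shrinks toward 0 as n grows.
        print(x, [f"{gap:.1e}" for gap in cdf_gaps(x, sizes)])
```

Note that the limit here is exponential, not normal: "asymptotic distribution" in this sense only means "limiting distribution".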

The central limit theorem states that if $X_1, X_2, \dots$ are independent and identically distributed (i.i.d.) with $\mathbb{E}[X_i] = \mu$ and finite variance $\sigma^2 = \text{Var}(X_i)$, then the sequence of (centered and scaled) sample means, $\sqrt{n}(S_n-\mu), ~ n\in\mathbb{N}$, converges in distribution to $S\sim N(0,\sigma^2)$, where\begin{equation*} S_n = \frac{1}{n}\sum_{i=1}^n X_i. \end{equation*}Therefore, the normal distribution is asymptotic in the sense of convergence in distribution: it is the limiting distribution of the (centered and scaled) sample mean of i.i.d. random variables.
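A quick simulation can illustrate this. The sketch below assumes, purely for illustration, that each $X_i \sim \text{Uniform}(0, 1)$, so $\mu = 1/2$ and $\sigma^2 = 1/12$. It draws many realisations of $\sqrt{n}(S_n - \mu)$, computes the empirical CDF at a few points, and compares it with the CDF of $N(0, \sigma^2)$. The helper names, sample sizes, and seed are arbitrary choices, not anything from the answer.

```python
import math
import random


def normal_cdf(x: float, sigma: float) -> float:
    """CDF of N(0, sigma^2) evaluated at x."""
    return 0.5 * (1.0 + math.erf(x / (sigma * math.sqrt(2.0))))


def scaled_means(n: int, trials: int, rng: random.Random) -> list[float]:
    """Draw `trials` realisations of sqrt(n) * (S_n - mu) with X_i ~ Uniform(0, 1)."""
    mu = 0.5  # E[X_i] for Uniform(0, 1)
    values = []
    for _ in range(trials):
        s_n = sum(rng.random() for _ in range(n)) / n  # sample mean S_n
        values.append(math.sqrt(n) * (s_n - mu))
    return values


def max_cdf_gap(n: int, trials: int, xs: tuple[float, ...], seed: int = 0) -> float:
    """Largest |empirical CDF - N(0, sigma^2) CDF| over the points in xs."""
    sigma = math.sqrt(1.0 / 12.0)  # standard deviation of Uniform(0, 1)
    samples = scaled_means(n, trials, random.Random(seed))
    gaps = []
    for x in xs:
        empirical = sum(v <= x for v in samples) / trials
        gaps.append(abs(empirical - normal_cdf(x, sigma)))
    return max(gaps)


if __name__ == "__main__":
    points = (-0.3, 0.0, 0.3)
    for n in (1, 2, 30):
        # The gap should shrink as n grows, up to Monte Carlo noise.
        print(n, round(max_cdf_gap(n, 20000, points), 4))
```

With $n = 1$ the scaled mean is just a uniform variable and the gap is clearly visible; by $n = 30$ it is down at the level of simulation noise.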

Edit: In the spirit of the book you're studying, here's a summary "in plain English": Consider computing the average of a collection of independent and identically distributed random data points. As the number of data points in the collection grows, the distribution of that average gets closer and closer to a normal distribution. It is in this sense that we call the normal distribution asymptotic.

Given that your quote defines *a* normal distribution rather than *the* normal distribution, I think your assumption that the tails approach $0$ is correct. Of course, the well-known Gaussian distribution satisfies these criteria, but many distributions that arise in practice come very close to being normal in this sense.
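The tail behaviour can be checked numerically for the Gaussian case. This is a minimal Python sketch, assuming the Gaussian density $\varphi(x) = e^{-(x-\mu)^2/(2\sigma^2)}/(\sigma\sqrt{2\pi})$; the function names are illustrative. The density shrinks rapidly as $|x|$ grows but stays strictly positive, and its logarithm is finite for every $x$. In floating point the density itself underflows to `0.0` by $|x| = 40$ for the standard normal; that is a limitation of the number format, not of the distribution, which is why the log-density is computed as well.

```python
import math


def normal_pdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Gaussian density; mathematically > 0 for every real x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def normal_log_pdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Log of the Gaussian density; finite for every real x, so the density never reaches 0."""
    z = (x - mu) / sigma
    return -0.5 * z * z - math.log(sigma * math.sqrt(2.0 * math.pi))


if __name__ == "__main__":
    for x in (1.0, 2.0, 4.0, 8.0, 16.0, 40.0):
        # normal_pdf prints 0.0 at x = 40 because of floating-point underflow;
        # the finite log-density shows the true value is still positive.
        print(x, normal_pdf(x), normal_log_pdf(x))
```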
