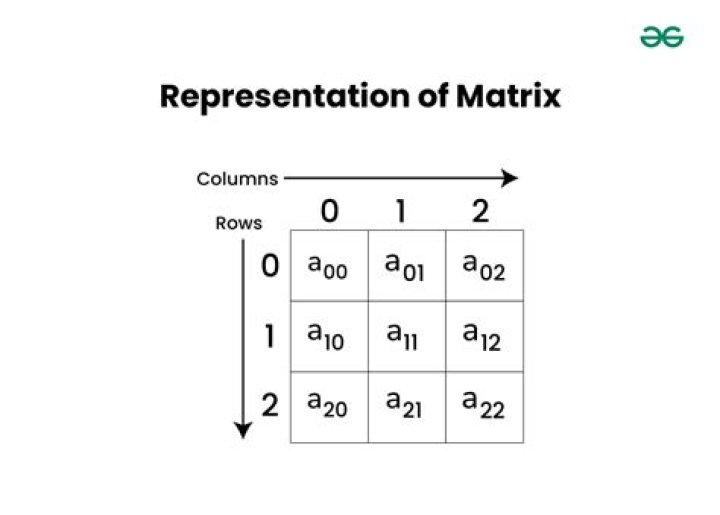

Relationship between the rows and columns of a matrix

I am having trouble understanding the relationship between the rows and columns of a matrix.

Say the following homogeneous system has a nontrivial solution:

$$
\begin{aligned}
3x_1 + 5x_2 - 4x_3 &= 0 \\
-3x_1 - 2x_2 + 4x_3 &= 0 \\
6x_1 + x_2 - 8x_3 &= 0
\end{aligned}
$$

Let $A$ be the coefficient matrix and row reduce $\begin{bmatrix} A & \mathbf 0 \end{bmatrix}$ to row-echelon form:

$\begin{bmatrix}3&5&-4&0\\-3&-2&4&0\\6&1&-8&0\\ \end{bmatrix} \rightarrow \begin{bmatrix}3&5&-4&0\\0&3&0&0\\0&0&0&0\\ \end{bmatrix}$

where $a_1$, $a_2$, $a_3$ denote the first three columns (the columns of $A$).

Here we see that $x_3$ is a free variable, and thus we can say that the 3rd column, $a_3$, is in $\text{span}(a_1, a_2)$.

But what does it mean for an echelon form of a matrix to have a row of $0$'s?

Does that mean the 3rd row can be generated by the 1st and 2nd rows, just like the 3rd column can be generated by the 1st and 2nd columns?

This raises another question for me: why do we mostly focus on the columns of a matrix? I get the impression that, for vectors and other concepts, our only concern is whether the columns span $\mathbb R^n$, whether the columns are linearly independent, and so on.

I thought linear algebra was all about solving systems of linear equations, and linear equations are the rows of a matrix, so I'd think it would be logical to focus more on rows than on columns. Why don't we?

3 Answers

Having a row of $0$'s in the row-echelon form means that we were able to write the third row of $A$ as a linear combination of the first and second rows. As it happens, for square matrices this is true precisely when one of the columns can be written as a linear combination of the others (that is, when the columns are not linearly independent). If you further reduce this to reduced row-echelon form, you get

$$\begin{bmatrix} 1 & 0 & -4/3 & 0\\ 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 \end{bmatrix}$$

Because the third column lacks a pivot, $x_3$ is our free variable, which means that we can write $a_3$ as a linear combination of the other two columns.
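To make both dependencies concrete, here is a small check in Python. The coefficients $-1$, $-3$ (for the rows) and $-4/3$, $0$ (for the columns) are my own, worked out by hand from the row operations and from the reduced form; the helper name `combine` is just illustrative.

```python
from fractions import Fraction

# Coefficient matrix A from the question (each row is one equation).
A = [
    [3, 5, -4],
    [-3, -2, 4],
    [6, 1, -8],
]

def combine(coeffs, vectors):
    """Return the linear combination sum(c * v) of equal-length vectors."""
    length = len(vectors[0])
    return [sum(c * v[i] for c, v in zip(coeffs, vectors)) for i in range(length)]

rows = A
cols = [list(col) for col in zip(*A)]  # a_1, a_2, a_3

# Row dependency: row 3 = -1 * row 1 - 3 * row 2.
row3 = combine([-1, -3], rows[:2])

# Column dependency: a_3 = -(4/3) * a_1 + 0 * a_2.
col3 = combine([Fraction(-4, 3), 0], cols[:2])

print(row3 == rows[2])  # True
print(col3 == cols[2])  # True
```

Note that the coefficients differ between the two cases: the row relation and the column relation are separate facts that happen to hold together.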

There's a very good reason for focusing on the columns of a matrix. This comes out of using $A$ as a linear transformation, where the "column space" gives us the "range" of the function $f(\vec x) = A \vec x$.
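One way to see why the columns describe the range: the product $A\vec x$ is the combination $x_1 a_1 + x_2 a_2 + x_3 a_3$ of the columns. A minimal sketch comparing the two computations (the input vector `x` is an arbitrary choice of mine, and the function names are illustrative):

```python
# Coefficient matrix A from the question.
A = [
    [3, 5, -4],
    [-3, -2, 4],
    [6, 1, -8],
]

def matvec(A, x):
    """Compute A x row by row (dot product of each row with x)."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def column_combination(A, x):
    """Compute x_1 a_1 + ... + x_n a_n, where a_j are the columns of A."""
    cols = list(zip(*A))
    return [sum(xj * col[i] for xj, col in zip(x, cols)) for i in range(len(A))]

x = [2, -1, 5]  # arbitrary illustrative input

print(matvec(A, x))              # [-19, 16, -29]
print(column_combination(A, x))  # [-19, 16, -29]
```

Both views give the same vector, so every output of $f$ lies in the span of the columns.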

Excellent question.

In some sense, the equations and variables represent equivalent information. Sometimes it is easier to approach the problem from the point of view of rows (equations, constraints), and sometimes from the point of view of columns (variables).

This is very deeply related to the notion of the dual problem in linear programming. Duality is exactly what converts equations/constraints into variables and vice versa, giving a different but related problem.

Mathematically, this boils down to working either with the matrix $A$ or with some form of $A^T$, which converts rows into columns and columns into rows. Not surprisingly, many key properties of $A$ and $A^T$ are the same: rank and singular values always, and, for square matrices, eigenvalues, determinant, and trace.
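For the matrix in the question, the equality of row rank and column rank can be checked directly. This is only a sketch: the `rank` function below is my own plain Gaussian elimination over exact fractions, not a library routine.

```python
from fractions import Fraction

def rank(M):
    """Rank via Gaussian elimination with exact rational arithmetic."""
    M = [[Fraction(v) for v in row] for row in M]
    n_rows, n_cols = len(M), len(M[0])
    r = 0  # index of the next pivot row
    for c in range(n_cols):
        # Find a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, n_rows) if M[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        M[r], M[pivot] = M[pivot], M[r]
        # Clear the entries below the pivot.
        for i in range(r + 1, n_rows):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == n_rows:
            break
    return r

A = [
    [3, 5, -4],
    [-3, -2, 4],
    [6, 1, -8],
]
A_T = [list(col) for col in zip(*A)]  # transpose: rows become columns

print(rank(A), rank(A_T))  # 2 2
```

Rank 2 for both means two independent rows and two independent columns, which matches the zero row and the free variable found in the question.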


Clearly, your matrix is singular, i.e. its determinant is zero. When we study the linear system $Ax=b$, we have two options: either there is an affine space of solutions (when $b\in \operatorname{Im} A$) or there are no solutions at all.

When you study the echelon form, you eventually arrive at a row of the form $\begin{pmatrix}0&0&0&a\end{pmatrix}$. If $a\ne 0$, then the system is inconsistent (a zero combination of the variables would have to produce a nonzero value). If $a=0$, then you have an affine subspace of solutions.
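The test on the last row can be sketched in Python as follows. The functions `echelon` and `is_consistent` are my own illustrative helpers, and the right-hand sides are examples I picked: $b = a_1 + a_2$ lies in the image by construction, while $(0, 0, 1)$ does not.

```python
from fractions import Fraction

def echelon(M):
    """Return a row-echelon form of M using exact rational arithmetic."""
    M = [[Fraction(v) for v in row] for row in M]
    r = 0  # index of the next pivot row
    for c in range(len(M[0])):
        pivot = next((i for i in range(r, len(M)) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, len(M)):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == len(M):
            break
    return M

def is_consistent(A, b):
    """True if Ax = b is solvable: no echelon row reads (0 ... 0 | a) with a != 0."""
    augmented = [row + [bi] for row, bi in zip(A, b)]
    for row in echelon(augmented):
        if all(v == 0 for v in row[:-1]) and row[-1] != 0:
            return False
    return True

A = [
    [3, 5, -4],
    [-3, -2, 4],
    [6, 1, -8],
]

print(is_consistent(A, [0, 0, 0]))   # True  (homogeneous system)
print(is_consistent(A, [8, -5, 7]))  # True  (b = a_1 + a_2)
print(is_consistent(A, [0, 0, 1]))   # False (last row becomes 0 0 0 | 1)
```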
