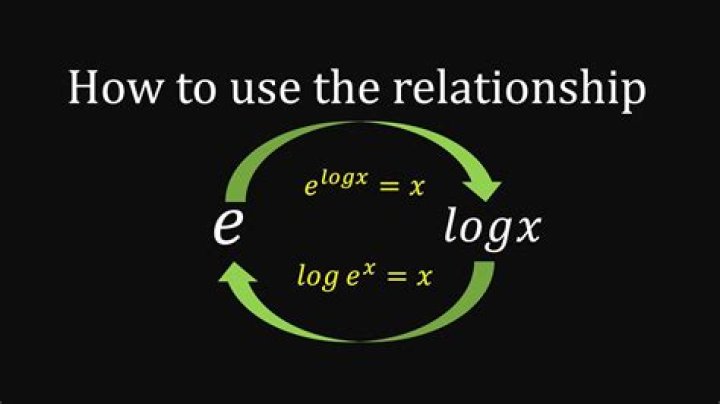

Relationship between E[log(X)] and E[X]

Let $X_1$ and $X_2$ be two random variables with expectations $E[X_1]$, $E[X_2]$ and variances $Var[X_1]$, $Var[X_2]$, respectively, defined by $$X_1=\sum_{i}\lambda_i(v_i+m_i)^{2}$$ $$X_2=\sum_{i}\lambda_i(v_i+n_i)^{2}$$ where the $v_i$ are i.i.d. standard Gaussian random variables (mean 0, variance 1).

Suppose the $m_i$ and $n_i$ are chosen so that $E[X_1]\lt E[X_2]$ and $Var[X_1]=Var[X_2]$. My question is:

Can we conclude that $$E[\log X_1]\lt E[\log X_2]?$$

Thank you:)

1 Answer

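If $Y_i = v_i + m_i \sim N(m_i,1)$, then the characteristic function (CF) of $Y_i$ is $f_i(t)=e^{\mathrm{i} m_i t}e^{-t^2/2}$, where $\mathrm{i}$ is the imaginary unit, hence: $$\mathbb{E}[Y_i^2]=-\frac{d^2}{dt^2}\left.f_i(t)\right|_{t=0}=m_i^2+1, $$ $$\mathbb{E}[Y_i^4]=\frac{d^4}{dt^4}\left.f_i(t)\right|_{t=0}=m_i^4+6m_i^2+3, $$ $$\operatorname{Var}[Y_i^2]=\mathbb{E}[Y_i^4]-\mathbb{E}[Y_i^2]^2=4m_i^2+2.$$ Since the $Y_i$ are independent, $\mathbb{E}[X_1]=\sum \lambda_i (m_i^2+1)$ and $\operatorname{Var}[X_1]=\sum \lambda_i^2 (4m_i^2+2)$, and likewise for $X_2$ with $n_i$ in place of $m_i$. The condition $\mathbb{E}[X_1]<\mathbb{E}[X_2]$ therefore translates into: $$ \sum \lambda_i m_i^2 < \sum \lambda_i n_i^2 $$ and the condition $\operatorname{Var}[X_1]=\operatorname{Var}[X_2]$ translates into: $$ \sum \lambda_i^2 m_i^2 = \sum \lambda_i^2 n_i^2. $$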
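The moment formulas above can be sanity-checked numerically. The following is a minimal sketch using only the Python standard library. The function names and the values of `lams` and `shifts` are hypothetical, chosen purely for illustration, and the Monte Carlo estimate only agrees with the closed form up to sampling noise.

```python
import random


def closed_form_moments(lams, shifts):
    """Mean and variance of X = sum_i lam_i * (v_i + shift_i)^2, v_i i.i.d. N(0, 1).

    Uses E[Y^2] = s^2 + 1 and Var[Y^2] = 4 s^2 + 2 for Y ~ N(s, 1),
    plus independence of the terms.
    """
    mean = sum(l * (s * s + 1.0) for l, s in zip(lams, shifts))
    var = sum(l * l * (4.0 * s * s + 2.0) for l, s in zip(lams, shifts))
    return mean, var


def monte_carlo_moments(lams, shifts, n_samples=100_000, seed=0):
    """Estimate the mean and (unbiased) variance of X by direct simulation."""
    rng = random.Random(seed)
    draws = [
        sum(l * (rng.gauss(0.0, 1.0) + s) ** 2 for l, s in zip(lams, shifts))
        for _ in range(n_samples)
    ]
    mean = sum(draws) / n_samples
    var = sum((x - mean) ** 2 for x in draws) / (n_samples - 1)
    return mean, var


if __name__ == "__main__":
    # Hypothetical example values, not taken from the question.
    lams = [1.0, 0.5, 2.0]
    shifts = [0.3, -1.2, 0.7]
    print("closed form:", closed_form_moments(lams, shifts))
    print("monte carlo:", monte_carlo_moments(lams, shifts))
```

The two printed pairs should be close to each other, with the gap shrinking as `n_samples` grows.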

Assuming that $X$ is a positive random variable with mean $\mu$, variance $\sigma^2$, a well-behaved CF and small skewness, we have (formally, since the series converges only for $0<X<2\mu$): $$\log X = \log\mu + \log\frac{X}{\mu} = \log\mu + \log\left(1+\frac{X-\mu}{\mu}\right)=\log\mu+\sum_{k=1}^{+\infty}(-1)^{k+1}\frac{(X-\mu)^k}{k\,\mu^k}$$ Taking expected values and truncating after the $k=2$ term (the $k=1$ term vanishes because $\mathbb{E}[X-\mu]=0$), we get: $$ \mathbb{E}[\log X] \approx \log\mu -\frac{\sigma^2}{2\mu^2}.$$ For fixed $\sigma$, the right-hand side is increasing in $\mu$, so in this context the inequality $\mathbb{E}[\log X_1]<\mathbb{E}[\log X_2]$ should follow from $\mu_1<\mu_2$ and $\sigma_1=\sigma_2$. However, to prove it rigorously we need good bounds for the higher central moments of $X_1$ and $X_2$.
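To see how the second-order approximation behaves in a concrete case, here is a hedged numerical sketch using only the Python standard library. The function names and the values of `lams`, `m` and `n` are hypothetical: they are picked so that $\sum \lambda_i^2 m_i^2 = \sum \lambda_i^2 n_i^2$ and $\sum \lambda_i m_i^2 < \sum \lambda_i n_i^2$, and so that $\sigma/\mu$ is fairly small, which is the regime where the truncation is plausible.

```python
import math
import random


def mean_and_variance(lams, shifts):
    """Closed-form mean and variance of X = sum_i lam_i * (v_i + shift_i)^2."""
    mean = sum(l * (s * s + 1.0) for l, s in zip(lams, shifts))
    var = sum(l * l * (4.0 * s * s + 2.0) for l, s in zip(lams, shifts))
    return mean, var


def second_order_log_mean(lams, shifts):
    """Truncated-series approximation E[log X] ~ log(mu) - sigma^2 / (2 mu^2)."""
    mu, var = mean_and_variance(lams, shifts)
    return math.log(mu) - var / (2.0 * mu * mu)


def monte_carlo_log_mean(lams, shifts, n_samples=100_000, seed=0):
    """Estimate E[log X] by direct simulation (subject to sampling noise)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = sum(l * (rng.gauss(0.0, 1.0) + s) ** 2 for l, s in zip(lams, shifts))
        total += math.log(x)
    return total / n_samples


if __name__ == "__main__":
    # Hypothetical parameters: equal variances, E[X_1] < E[X_2].
    lams = [1.0, 2.0]
    m = [0.0, 5.0]
    n = [10.0, 0.0]
    for name, shifts in (("X_1", m), ("X_2", n)):
        mu, var = mean_and_variance(lams, shifts)
        print(name, "mean:", mu, "variance:", var)
        print(name, "approx E[log X]:", second_order_log_mean(lams, shifts))
        print(name, "simulated E[log X]:", monte_carlo_log_mean(lams, shifts))
```

This only illustrates one parameter choice and is not evidence for the general claim. When $\sigma/\mu$ is not small the neglected higher-order terms can matter, which is exactly why bounds on the higher central moments are needed.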
