Proof of equivalence of two ways of calculating directional derivative

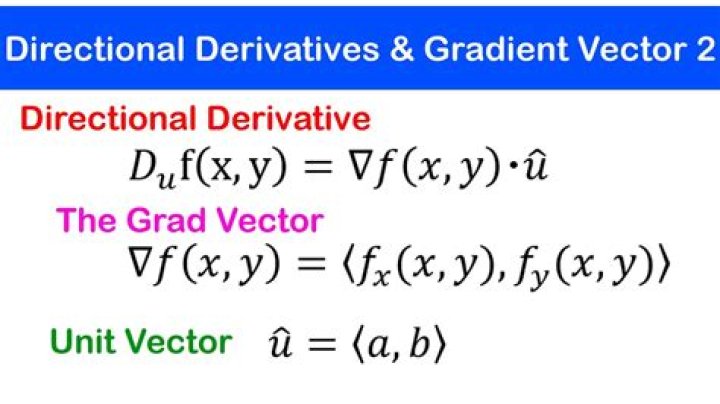

I am seeking the connection between two formulas that I saw to compute the directional derivative of function $f$ in the direction of a vector $\vec v$.

One of them is :

$$\nabla_{\vec v} f(\vec x_0) = \lim_{h\to 0} \frac{f(\vec x_0+h\vec v)-f(\vec x_0)}{h}$$

and the other one is

$$\nabla_{\vec v} f(x,y)=\nabla f\cdot \vec v$$

Also, will I have to use a unit vector to compute the directional derivative and the slope?

Thank you and cheers :)

3 Answers

Let $f: D\subseteq \Bbb R^n \to \Bbb R$ be differentiable. Then

$$\begin{align}\require{cancel}\nabla_{\vec v} f(\vec x_0) &= \lim_{h\to 0} \frac{f(\vec x_0 + h\vec v)-f(\vec x_0)}{h} \\ &= \lim_{h\to 0} \frac{\left(\color{red}{\cancel {\color{black}{f(\vec x_0)}}}+\nabla f(\vec x_0)\cdot (h\vec v) + o(h)\right) - \color{red}{\cancel {\color{black}{f(\vec x_0)}}}}{h} \\ &= \lim_{h\to 0} \frac{\color{red}{\cancel {\color{black}{h}}}\left[\nabla f(\vec x_0)\cdot (\vec v)\right]}{\color{red}{\cancel {\color{black}{h}}}} + \cancelto{0}{\lim_{h\to 0}\frac{o(h)}{h}} \\ &= \nabla f(\vec x_0) \cdot \vec v\end{align}$$

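You don't have to use a unit vector to calculate the directional derivative, but the directional derivative only corresponds to the geometric idea of slope if $\vec v$ is a unit vector. For a general $\vec v$, the value is the slope in the direction of $\vec v$ multiplied by $\|\vec v\|$.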
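As a quick numerical sanity check of the identity and of the unit-vector remark, here is a minimal sketch. The test function `f`, the point `x0` and the vector `v` are arbitrary choices made for illustration, not anything from the question.

```python
import math

def f(x, y):
    # Hypothetical test function, chosen only for illustration.
    return x**2 * y + math.sin(y)

def grad_f(x, y):
    # Gradient of f computed by hand: (2xy, x^2 + cos y).
    return (2 * x * y, x**2 + math.cos(y))

def difference_quotient(x0, v, h):
    # (f(x0 + h v) - f(x0)) / h, the expression inside the limit.
    return (f(x0[0] + h * v[0], x0[1] + h * v[1]) - f(*x0)) / h

def gradient_formula(x0, v):
    # grad f(x0) . v
    gx, gy = grad_f(*x0)
    return gx * v[0] + gy * v[1]

x0 = (1.0, 2.0)
v = (3.0, 4.0)                    # not a unit vector: its length is 5
u = (v[0] / 5.0, v[1] / 5.0)      # unit vector in the same direction

for h in (1e-2, 1e-4, 1e-6):
    print(h, difference_quotient(x0, v, h))   # approaches grad . v as h shrinks
print("grad . v =", gradient_formula(x0, v))
print("grad . u =", gradient_formula(x0, u))  # the slope; grad . v is 5 times this
```

The difference quotients should settle on `grad . v`, and `grad . v` should be exactly $\|\vec v\| = 5$ times the slope `grad . u`.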

Edit: I assume that you are familiar with Taylor's theorem. Recall that the first order Taylor expansion of a function $g: \Bbb R\to \Bbb R$ around $a$ is

$$g(a+h) = g(a) + g'(a)h + o(h)$$

Here $o(h)$ is a stand-in for the remainder function $g(a+h)-g(a)-g'(a)h$. This notation (called little-o notation) tells us that the remainder has the property $$\lim_{h\to 0}\frac{g(a+h)-g(a)-g'(a)h}{h} = 0.$$

For functions of a vector variable, there's a similar Taylor expansion:

$$f(\vec a + \vec h) = f(\vec a) + \nabla f(\vec a)\cdot \vec h + o(\|\vec h\|)$$

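So what I'm doing above is replacing $f(\vec x_0 + h\vec v)$ with its first order Taylor expansion, taking $\vec h = h\vec v$. Since $\|h\vec v\| = |h|\,\|\vec v\|$ and $\|\vec v\|$ is a fixed constant, the remainder $o(\|h\vec v\|)$ is again $o(h)$. Then two terms cancel, one tends to zero, and we're left with the identity you're looking for.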
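To see the little-o behaviour concretely, the following sketch computes the remainder divided by $h$ for a few shrinking values of $h$. The function `f`, the point `a` and the direction `v` are hypothetical choices for illustration only.

```python
import math

def f(x, y):
    # Hypothetical smooth test function.
    return math.exp(x) * math.cos(y)

def grad_f(x, y):
    # Gradient computed by hand: (e^x cos y, -e^x sin y).
    return (math.exp(x) * math.cos(y), -math.exp(x) * math.sin(y))

def remainder_ratio(a, v, h):
    # [f(a + h v) - f(a) - grad f(a) . (h v)] / h
    gx, gy = grad_f(*a)
    linear = gx * (h * v[0]) + gy * (h * v[1])
    return (f(a[0] + h * v[0], a[1] + h * v[1]) - f(*a) - linear) / h

a = (0.5, 1.0)
v = (2.0, -1.0)
steps = (1e-1, 1e-2, 1e-3)
ratios = [abs(remainder_ratio(a, v, h)) for h in steps]
for h, r in zip(steps, ratios):
    print(h, r)   # the ratio shrinks roughly in proportion to h
```

For a twice-differentiable function like this one the ratio shrinks about linearly in $h$, which is stronger than what little-o requires (it only has to tend to zero).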

Another way to see this intuitively is to take a scalar function F(x,y)

and a vector function R(t) = x(t) i + y(t) j.

Substituting x(t), y(t) into F yields F(x(t),y(t)).

This gives the values of F along the path traced out by R(t).

Differentiating F with respect to t using the chain rule gives

dF/dt = (∂F/∂x)(dx/dt) + (∂F/∂y)(dy/dt)

This represents the rate of change of F along the path R.

Notice that the expression for dF/dt is identical to the dot product of the vectors (∂F/∂x, ∂F/∂y) and (dx/dt, dy/dt).

These vectors are exactly Grad F and R'(t).

So what does this mean? We have found that Grad F (dot) R'(t) is the rate of change of F as we move along R(t). And in which direction does R(t) move at time t? Along its tangent vector R'(t). So this expression is the rate of change of F at the point R(t) in the direction of R'(t).

So all we have to do to get the directional derivative is to "artificially" construct a path R(t) such that, at the value of t corresponding to the point (x,y), its tangent R'(t) equals the arbitrary vector V in whose direction you want the derivative. For example, the straight line R(t) = (x,y) + tV has R'(t) = V for every t.

Thus you get Grad F (dot) V,

which is the rate of change of F in the direction of V.

This also explains the key property of Grad F. To maximise Grad F (dot) V over unit vectors V,

V must point in the direction of Grad F (it's a dot product, which is largest when the two vectors are parallel). So the direction of maximum rate of change is the direction of Grad F. Substituting V = Grad F / abs(Grad F) into the directional derivative equation shows that the maximum rate of change of F is abs(Grad F), which is why the magnitude of Grad F is the maximum rate of change.
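Both claims can be checked numerically. The sketch below compares the chain-rule expression with a direct numerical derivative of t -> F(R(t)), and then scans unit vectors V to see which one maximises Grad F (dot) V. The function `F`, the circular path `R` and the sample point are hypothetical choices for illustration.

```python
import math

def F(x, y):
    # Hypothetical scalar function.
    return x * y**2

def grad_F(x, y):
    # Gradient computed by hand: (y^2, 2xy).
    return (y**2, 2 * x * y)

def R(t):
    # Hypothetical path: a circle of radius 2.
    return (2 * math.cos(t), 2 * math.sin(t))

def R_prime(t):
    return (-2 * math.sin(t), 2 * math.cos(t))

def dF_dt_chain_rule(t):
    # Grad F (dot) R'(t), evaluated at the point R(t).
    gx, gy = grad_F(*R(t))
    dx, dy = R_prime(t)
    return gx * dx + gy * dy

def dF_dt_numeric(t, h=1e-6):
    # Central difference of t -> F(R(t)).
    return (F(*R(t + h)) - F(*R(t - h))) / (2 * h)

def best_unit_direction(x, y, samples=3600):
    # Scan unit vectors (cos a, sin a); keep the one maximising Grad F (dot) V.
    gx, gy = grad_F(x, y)
    angles = [2 * math.pi * k / samples for k in range(samples)]
    best = max(angles, key=lambda a: gx * math.cos(a) + gy * math.sin(a))
    rate = gx * math.cos(best) + gy * math.sin(best)
    return (math.cos(best), math.sin(best)), rate

t0 = 0.7
print(dF_dt_chain_rule(t0), dF_dt_numeric(t0))   # the two should agree closely

direction, rate = best_unit_direction(1.0, 2.0)
gx, gy = grad_F(1.0, 2.0)
print(direction, rate, math.hypot(gx, gy))       # rate should be close to abs(Grad F)
```

The scan is a brute-force check rather than a proof, but the best direction it finds should line up with Grad F, and the best rate with abs(Grad F).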

I believe the following works. It's a more direct proof inspired by this paper.

Let $\vec{u}=(u_1,u_2)$ be a unit vector and $f:\mathbb{R}^2\rightarrow \mathbb{R}$ a function differentiable at $(a,b)$. The result is easy to prove when either $u_1$ or $u_2$ is zero (see the paper for more info), so I'll assume $u_1,u_2\neq 0$.

$$\frac{\partial f}{\partial \vec{u}}:=\lim_{h\rightarrow 0}\frac{f(a+hu_1,b+hu_2)-f(a,b)}{h}$$

$$=\lim_{h\rightarrow 0}\frac{f(a+hu_1,b+hu_2)-f(a,b+hu_2)}{hu_1}u_1+\lim_{h\rightarrow 0}\frac{f(a,b+hu_2)-f(a,b)}{hu_2}u_2$$

The right-most term is clearly equal to $\partial _yf(a,b)u_2$. Applying the Mean Value Theorem to the left-most term we have

$$=\lim_{h\rightarrow 0}\partial_xf(\alpha(h),b+hu_2)u_1+\partial _yf(a,b)u_2$$

where $\alpha(h)$ is between $a$ and $a+hu_1$, meaning $\alpha(h)\rightarrow a$ as $h\rightarrow 0$. Assuming $\partial_x f$ exists near $(a,b)$ and is continuous at $(a,b)$ (which the Mean Value Theorem step and this limit both need), the above is equal to

$$\partial_xf(a,b)u_1+\partial _yf(a,b)u_2$$

as wanted.
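Here is a small numerical illustration of the splitting step, assuming a hypothetical smooth function `f` and an arbitrary unit vector with both components nonzero. It evaluates the two one-variable quotients separately and compares them with $\partial_xf(a,b)u_1$ and $\partial_yf(a,b)u_2$.

```python
import math

def f(x, y):
    # Hypothetical smooth test function.
    return math.sin(x) * y + x * y**2

def fx(x, y):
    # Partial derivative in x, computed by hand.
    return math.cos(x) * y + y**2

def fy(x, y):
    # Partial derivative in y, computed by hand.
    return math.sin(x) + 2 * x * y

def split_quotient(a, b, u1, u2, h):
    # First term: only x varies, with y held at b + h*u2.
    first = (f(a + h * u1, b + h * u2) - f(a, b + h * u2)) / (h * u1) * u1
    # Second term: only y varies, with x held at a.
    second = (f(a, b + h * u2) - f(a, b)) / (h * u2) * u2
    return first, second

a, b = 0.3, 1.2
u1, u2 = 0.6, 0.8          # unit vector, both components nonzero
h = 1e-6
first, second = split_quotient(a, b, u1, u2, h)
full = (f(a + h * u1, b + h * u2) - f(a, b)) / h

print(first, fx(a, b) * u1)     # first term vs. its claimed limit
print(second, fy(a, b) * u2)    # second term vs. its claimed limit
print(first + second, full)     # the split is just a rewriting of the full quotient
```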

The proof can be generalized to other dimensions by expanding the difference quotient in the definition of $\partial f/\partial \vec{u}$ so that each term varies in only one coordinate. For example, for a function $f:\mathbb{R}^3\rightarrow \mathbb{R}$ we would begin the proof by writing that difference quotient as

$$\frac{f(a+hu_1,b+hu_2,c+hu_3)-f(a,b+hu_2,c+hu_3)}{hu_1}u_1 +\frac{f(a,b+hu_2,c+hu_3)-f(a,b,c+hu_3)}{hu_2}u_2 +\frac{f(a,b,c+hu_3)-f(a,b,c)}{hu_3}u_3$$

and we would need to use the Mean Value Theorem in all terms except the last.
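The same telescoping can be written for any number of coordinates. The sketch below is one possible way to code it, with a hypothetical helper `telescoped_quotient` and a hypothetical test function `g` on $\mathbb{R}^3$. It assumes every component of the direction is nonzero, as in the proof.

```python
import math

def telescoped_quotient(f, a, u, h):
    # Sum of difference quotients in which only one coordinate changes per term.
    # Assumes every u[i] is nonzero.
    n = len(a)
    total = 0.0
    for i in range(n):
        # Coordinates before i are already back at a; coordinate i and later are shifted.
        upper = [a[j] if j < i else a[j] + h * u[j] for j in range(n)]
        # Same point, but coordinate i has also been moved back to a[i].
        lower = [a[j] if j <= i else a[j] + h * u[j] for j in range(n)]
        total += (f(upper) - f(lower)) / (h * u[i]) * u[i]
    return total

def g(p):
    # Hypothetical test function on R^3.
    return p[0] * p[1] + p[1] * p[2]**2 + math.sin(p[0])

def grad_g(p):
    # Gradient computed by hand.
    return (p[1] + math.cos(p[0]), p[0] + p[2]**2, 2 * p[1] * p[2])

point = (0.5, 1.0, 2.0)
unit = (1 / 3, 2 / 3, 2 / 3)     # unit vector with nonzero components
approx = telescoped_quotient(g, point, unit, 1e-6)
exact = sum(gi * ui for gi, ui in zip(grad_g(point), unit))
print(approx, exact)             # should agree to several digits
```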
