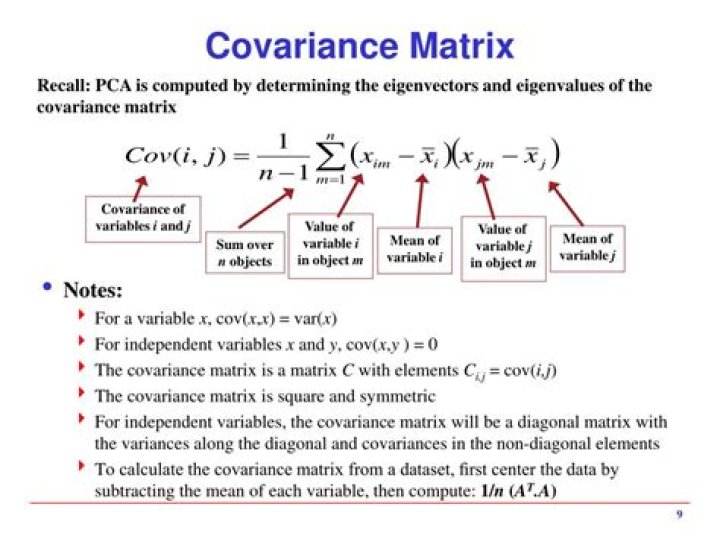

Proof of Covariance

Covariance comes up constantly in my statistics classes. However, the only proof I have found so far is the one below. My question is: how do I deal with the double integral in the covariance, or is there another proof for the covariance?

\begin{align}\text{Cov}(X,Y)& = \mathbb{E}\big[(X- \mathbb{E}(X))(Y- \mathbb{E}(Y))\big]\\ &= \int_{-\infty}^{\infty}\int_{-\infty}^{\infty}(x- \mathbb{E}(X))(y- \mathbb{E}(Y))f(x,y)\,dx\,dy\\&= \mathbb{E}(XY)- \mathbb{E}(X)\mathbb{E}(Y)\; .\end{align}

UPDATE

Could that also be true?

\begin{align}\text{Cov}(X,Y)&=\mathbb{E}(XY -X\mathbb{E}Y -Y\mathbb{E}X + \mathbb{E}X\mathbb{E}Y)\\&=\mathbb{E}(XY)- \mathbb{E}X\mathbb{E}Y -\mathbb{E}Y\mathbb{E}X + \mathbb{E}X \mathbb{E}Y\\&= \mathbb{E}(XY)- \mathbb{E}X \mathbb{E}Y \; . \end{align}

2 Answers

I wish you had asked this question on stats.SE, where you essentially have a very similar question to which @cardinal's comment provides the answer. But, to dot the $i$'s and cross the $t$'s, the notion of covariance applies to random variables $X$ and $Y$ that enjoy the property that $E[X^2]$ and $E[Y^2]$ are finite. This property implies that the random variables have finite means, which we can denote by $\mu_X$ and $\mu_Y$ respectively, and also finite variances, which we denote by $\sigma_X^2$ and $\sigma_Y^2$.

For such random variables, the covariance $\operatorname{cov}(X,Y)$ of $X$ and $Y$ is defined as $$\operatorname{cov}(X,Y) = E[(X-\mu_X)(Y-\mu_Y)]$$ where the expectation shown exists and has finite value because of our assumption that $E[X^2]$ and $E[Y^2]$ are finite. In fact, $$|\operatorname{cov}(X,Y)| \leq \sigma_X\sigma_Y.$$

Now, if we multiply out the right hand side in the definition and use the linearity of expectation $$E\left[a + \sum_i b_iX_i\right] = a + \sum_i b_iE[X_i],$$ we have that $$\begin{align} \operatorname{cov}(X,Y) &= E[(X-\mu_X)(Y-\mu_Y)]\\ &= E[XY - \mu_XY - \mu_YX + \mu_X\mu_Y]\\ &= E[XY] - \mu_XE[Y] - \mu_YE[X] + \mu_X\mu_Y\\ &= E[XY] - \mu_X\mu_Y - \mu_X\mu_Y + \mu_X\mu_Y\\ &= E[XY] - \mu_X\mu_Y\\ &= E[XY] - E[X]E[Y] \end{align}$$ without worrying about discrete or continuous random variables and whether we have integrals or sums, etc.
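
If you do want to handle the double integral from the question directly, the same expansion can be carried out under the integral sign. What follows is only a sketch, and it assumes that $X$ and $Y$ have a joint density $f(x,y)$ with marginal densities $f_X$ and $f_Y$ (this notation is introduced here for illustration). Writing $\iint$ for $\int_{-\infty}^{\infty}\int_{-\infty}^{\infty}$, $$\begin{align} \iint (x-\mu_X)(y-\mu_Y)f(x,y)\,dx\,dy &= \iint xy\,f(x,y)\,dx\,dy - \mu_X\iint y\,f(x,y)\,dx\,dy\\ &\quad - \mu_Y\iint x\,f(x,y)\,dx\,dy + \mu_X\mu_Y\iint f(x,y)\,dx\,dy\\ &= E[XY] - \mu_X\mu_Y - \mu_Y\mu_X + \mu_X\mu_Y\\ &= E[XY] - \mu_X\mu_Y. \end{align}$$ The second equality uses $\iint y\,f(x,y)\,dx\,dy = \int_{-\infty}^{\infty} y\,f_Y(y)\,dy = \mu_Y$, the analogous statement for $x$, and $\iint f(x,y)\,dx\,dy = 1$. Splitting the integral into four pieces is justified because each piece is finite when $E[X^2]$ and $E[Y^2]$ are finite.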

Hint: Use $\mathbb{E}(X+Y)=\mathbb{E}(X)+\mathbb{E}(Y)$, and expand the right hand side of your original equation.

The integral form only works for the continuous case. What if you have a discrete case? Say, $X\sim \text{Bernoulli}(1/2)$.
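
To make the discrete case concrete, here is a small sketch that checks the identity with sums instead of integrals. The joint pmf is a made-up example (it is not from the question); it is chosen so that both marginals are Bernoulli(1/2), and the helper name `expect` is likewise just for illustration. Exact fractions are used so the two results can be compared without rounding error.

```python
from fractions import Fraction

# Hypothetical joint pmf of (X, Y), chosen only for illustration.
# Both marginals are Bernoulli(1/2), and X and Y are positively dependent.
pmf = {
    (0, 0): Fraction(3, 8),
    (0, 1): Fraction(1, 8),
    (1, 0): Fraction(1, 8),
    (1, 1): Fraction(3, 8),
}


def expect(g):
    """Return E[g(X, Y)] as a finite sum over the support of the pmf."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())


mean_x = expect(lambda x, y: x)
mean_y = expect(lambda x, y: y)

# Covariance straight from the definition E[(X - E X)(Y - E Y)].
cov_definition = expect(lambda x, y: (x - mean_x) * (y - mean_y))

# Covariance from the expanded form E[XY] - E[X] E[Y].
cov_shortcut = expect(lambda x, y: x * y) - mean_x * mean_y

print(cov_definition, cov_shortcut)  # prints "1/8 1/8"
```

Both expressions agree, as the linearity argument predicts; nothing in the computation depends on whether the expectation is a sum or an integral.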
