Probability space in convergence in probability and convergence in distribution

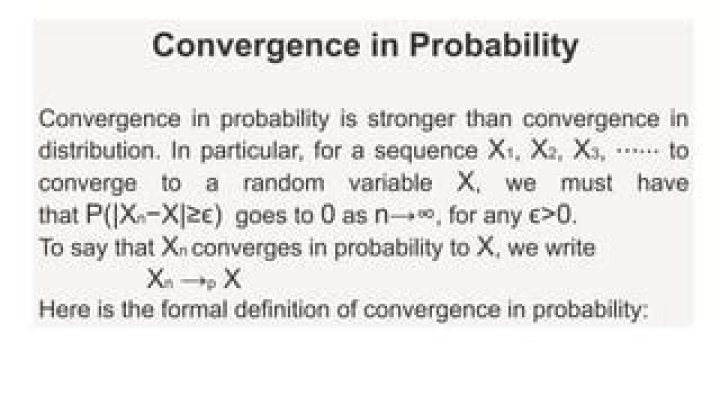

From wiki, the definition of convergence in probability:

A sequence $\lbrace X_{n}\rbrace$ of random variables converges in probability towards the random variable $X$ if for all $\epsilon >0$, $\lim_{n \rightarrow \infty} P(|X_{n}-X| > \epsilon) = 0$.

Since I have just started reading about measure theory and stochastic processes, I am confused about which $P$, and consequently which $\Omega$ and $\mathcal{F}$, is being used in the different modes of convergence.

From my limited understanding of stochastic processes, all the $X_{n}$ are defined on one common probability space $(\Omega, \mathcal{F}, P)$? From $P$ one can recover the marginal distribution of each individual $X_{n}$, and the probability computed above must involve $X_{n}$ and $X$ jointly? Most worked proofs of convergence in probability that I have found deal mainly with the case where $X$ is a constant.

Then what about convergence in distribution? From van der Vaart, Asymptotic Statistics, page 258, it seems that the $X_{n}$ do not have to be defined on the same probability space.

We extend the preceding definition to a sequence of arbitrary maps $X_{n}: \Omega_{n} \mapsto \mathbb{D}$ defined on probability spaces $(\Omega_{n}, \mathcal{U}_{n}, P_{n})$

We say that a sequence of arbitrary maps $X_{n}: \Omega_{n} \mapsto \mathbb{D}$ converges in distribution to a random element $X$ if $E^{*}f(X_{n}) \rightarrow E f(X)$ for every bounded, continuous function $f: \mathbb{D} \mapsto \mathbb{R}$

Here $E^{*}$ is the outer expectation, which is defined as follows:

For an arbitrary map $X: \Omega \mapsto \mathbb{D}$, $E^{*}f(X) = \inf \lbrace EU: U: \Omega \mapsto \mathbb{R} \text{, measurable, } U \geq f(X), EU \text{ exists} \rbrace $

So in the expression $E^{*}f(X_{n}) \rightarrow E f(X)$, is $E^{*}f(X_{n})$ defined using $P$ (where I assume $X$ lives on the probability space $(\Omega, \mathcal{F}, P)$) or using $P_{n}$, and is $Ef(X)$ defined using $P$?

Later he defines convergence in probability using outer probability, and again I have trouble understanding whether $P$ is the law of $X_{n}$, of $X$, or of the two jointly.

An arbitrary sequence of maps $X_{n}: \Omega_{n} \mapsto \mathbb{D}$ converges in probability to $X$ if $P^{*}(d(X_{n},X) > \epsilon) \rightarrow 0$ for all $\epsilon >0$

I'm trying my best to express my issue, but as you can see from the writing, it is all muddy to me now.

1 Answer

For most problems working with random variables $X, Y, Z$, $\{X_n\}$, and so on, it is easiest to simply assume they are all on the same probability space. This is essential for expressions like $P[|X_n-X|>\epsilon]$ or $P[d(X_n,X)>\epsilon]$, since these implicitly assume $X$ and $X_n$ are on the same probability space (else, the events $\{|X_n-X|>\epsilon\}$ and $\{d(X_n,X)>\epsilon\}$ would not make sense). So you can write $X$ and $X_n$ more formally as $X(\omega)$ and $X_n(\omega)$ for outcomes $\omega \in S$, where $S$ is the sample space of a single probability experiment.
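To make the "same probability space" point concrete, here is a minimal simulation sketch. The construction $X_n = X + Z/n$ with independent standard normals $X$ and $Z$ is my own illustrative assumption, not something from your book, and the function name `estimate_tail_prob` is hypothetical. Each simulated outcome $\omega = (x, z)$ determines both $X(\omega)$ and $X_n(\omega)$, which is what lets us evaluate the event $\{|X_n - X| > \epsilon\}$ at all.

```python
import random


def estimate_tail_prob(n, eps, num_samples=20000, seed=0):
    """Monte Carlo estimate of P(|X_n - X| > eps) for X_n = X + Z/n.

    Illustrative assumption: X and Z are independent standard normals
    defined on one common sample space, so each draw is one outcome omega.
    """
    rng = random.Random(seed)
    exceed = 0
    for _ in range(num_samples):
        # One outcome omega = (x, z) fixes the values of BOTH X and X_n.
        x = rng.gauss(0.0, 1.0)
        z = rng.gauss(0.0, 1.0)
        x_n = x + z / n
        if abs(x_n - x) > eps:
            exceed += 1
    return exceed / num_samples


if __name__ == "__main__":
    for n in (1, 5, 25, 125):
        print(n, estimate_tail_prob(n, eps=0.1))
```

The estimated probabilities should shrink towards zero as $n$ grows, which is the definition of convergence in probability applied to this particular joint construction.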

The case of convergence in distribution makes limiting statements with expressions that involve only individual variables $X_n$ or $X$, with no expressions including two variables together. The author of your book exploits this property to generalize by allowing each variable to live on a different probability space. So the author is likely assuming that the limiting variable $X$ also has its own space (from which you can evaluate probabilities and expectations associated with $X$). However, this generalization to everything having its own space does not seem very important. If it helps, you can restrict your focus, even in the "convergence in distribution" definition, to the case when all random variables $X, X_1, X_2, \ldots$ lie on the same space.
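A standard way to see that convergence in distribution looks only at individual variables is the example $X_n = -X$ with $X$ standard normal (my choice of example; the function name `compare_marginal_and_joint` is hypothetical). By symmetry every $X_n$ has the same law as $X$, so $X_n \to X$ in distribution trivially, yet $|X_n - X| = 2|X|$ does not become small. A rough sketch:

```python
import random


def compare_marginal_and_joint(num_samples=20000, eps=0.5, seed=1):
    """Compare a marginal quantity with a joint one for X_n = -X.

    Illustrative assumption: X is standard normal and X_n = -X for every n.
    Returns (P(X <= 0.5), P(X_n <= 0.5), P(|X_n - X| > eps)) as estimates.
    """
    rng = random.Random(seed)
    xs = [rng.gauss(0.0, 1.0) for _ in range(num_samples)]
    xns = [-x for x in xs]  # same outcome omega, different random variable

    # Marginal statements: each uses only one variable at a time.
    cdf_x = sum(x <= 0.5 for x in xs) / num_samples
    cdf_xn = sum(x <= 0.5 for x in xns) / num_samples

    # Joint statement: needs X_n and X evaluated at the same outcome.
    joint = sum(abs(a - b) > eps for a, b in zip(xns, xs)) / num_samples
    return cdf_x, cdf_xn, joint


if __name__ == "__main__":
    print(compare_marginal_and_joint())
```

The two marginal estimates should nearly agree, while the joint probability stays far from zero. So the distributional statement never needed to know how $X_n$ and $X$ are related, whereas the in-probability statement depends entirely on it.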

Strictly speaking, if we have random variables $X_1, X_2, \ldots$ on different spaces $S_1, S_2, \ldots$, we could bring them together by defining a product space $S_1 \times S_2 \times \cdots$, and then assume $\{X_i\}_{i=1}^{\infty}$ are mutually independent. In this way we can imagine them all on the same space even if they originally are not.
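Written out, the product construction might look as follows. The measures $P_n$ on $S_n$ and the lifted variables $\tilde X_n$ are notation I am introducing for illustration, and I am assuming each $X_n$ is measurable:

$$
\Omega = \prod_{n \geq 1} S_n, \qquad P = \bigotimes_{n \geq 1} P_n, \qquad \tilde X_n(\omega) = X_n(\omega_n) \quad \text{for } \omega = (\omega_1, \omega_2, \ldots) \in \Omega .
$$

Since $\tilde X_n$ depends only on the $n$-th coordinate, for every measurable set $B$

$$
P(\tilde X_n \in B) = P_n(X_n \in B),
$$

so each $\tilde X_n$ has the same law as the original $X_n$, and the $\tilde X_n$ are mutually independent under $P$. Note that independence here is a choice made by this particular construction. It does not affect statements about convergence in distribution, which use only the individual laws, but a statement such as $P(|\tilde X_n - \tilde X| > \epsilon) \to 0$ does depend on how the variables are placed together on the common space.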

Philosophically, you could imagine a huge probability space defined by the space-time of the universe, in which case the event $\{X>5\}$ is on the same space as the event that I get up and order another cup of coffee.

It might be interesting if someone could give an example where treating things in different spaces would be important (I cannot think of one).
