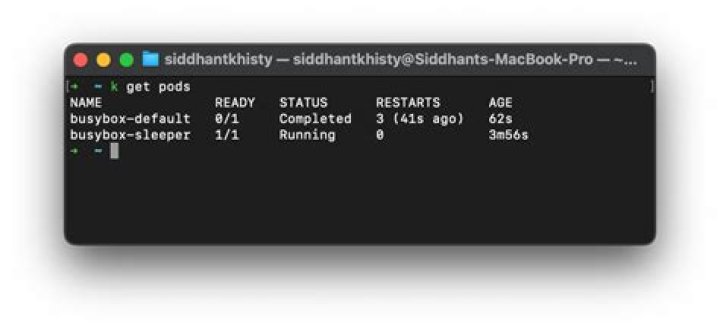

kubectl get pod READY 0/1 state

I am following a lab on Kubernetes and MongoDB, but all the pods are always in the 0/1 state. What does that mean? How do I make them READY 1/1?

```
[root@master-node ~]# kubectl get pod
NAME                                 READY   STATUS    RESTARTS   AGE
mongo-express-78fcf796b8-wzgvx       0/1     Pending   0          3m41s
mongodb-deployment-8f6675bc5-qxj4g   0/1     Pending   0          160m
nginx-deployment-64bd7b69c-wp79g     0/1     Pending   0          4h44m
```

```
[root@master-node ~]# kubectl get pod nginx-deployment-64bd7b69c-wp79g -o yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: "2021-07-27T17:35:57Z"
  generateName: nginx-deployment-64bd7b69c-
  labels:
    app: nginx
    pod-template-hash: 64bd7b69c
  name: nginx-deployment-64bd7b69c-wp79g
  namespace: default
  ownerReferences:
  - apiVersion: apps/v1
    blockOwnerDeletion: true
    controller: true
    kind: ReplicaSet
    name: nginx-deployment-64bd7b69c
    uid: 5b1250dd-a209-44be-9efb-7cf5a63a02a3
  resourceVersion: "15912"
  uid: d71047b4-d0e6-4d25-bb28-c410639a82ad
spec:
  containers:
  - image: nginx:1.14.2
    imagePullPolicy: IfNotPresent
    name: nginx
    ports:
    - containerPort: 8080
      protocol: TCP
    resources: {}
    terminationMessagePath: /dev/termination-log
    terminationMessagePolicy: File
    volumeMounts:
    - mountPath: /var/run/secrets/
      name: kube-api-access-2zr6k
      readOnly: true
  dnsPolicy: ClusterFirst
  enableServiceLinks: true
  preemptionPolicy: PreemptLowerPriority
  priority: 0
  restartPolicy: Always
  schedulerName: default-scheduler
  securityContext: {}
  serviceAccount: default
  serviceAccountName: default
  terminationGracePeriodSeconds: 30
  tolerations:
  - effect: NoExecute
    key:
    operator: Exists
    tolerationSeconds: 300
  - effect: NoExecute
    key:
    operator: Exists
    tolerationSeconds: 300
  volumes:
  - name: kube-api-access-2zr6k
    projected:
      defaultMode: 420
      sources:
      - serviceAccountToken:
          expirationSeconds: 3607
          path: token
      - configMap:
          items:
          - key: ca.crt
            path: ca.crt
          name: kube-root-ca.crt
      - downwardAPI:
          items:
          - fieldRef:
              apiVersion: v1
              fieldPath: metadata.namespace
            path: namespace
status:
  conditions:
  - lastProbeTime: null
    lastTransitionTime: "2021-07-27T17:35:57Z"
    message: '0/1 nodes are available: 1 node(s) had taint { }, that the pod didn''t tolerate.'
    reason: Unschedulable
    status: "False"
    type: PodScheduled
  phase: Pending
  qosClass: BestEffort
```


```
[root@master-node ~]# kubectl describe pod nginx-deployment-64bd7b69c-wp79g
Name:           nginx-deployment-64bd7b69c-wp79g
Namespace:      default
Priority:       0
Node:           <none>
Labels:         app=nginx
                pod-template-hash=64bd7b69c
Annotations:    <none>
Status:         Pending
IP:
IPs:            <none>
Controlled By:  ReplicaSet/nginx-deployment-64bd7b69c
Containers:
  nginx:
    Image:        nginx:1.14.2
    Port:         8080/TCP
    Host Port:    0/TCP
    Environment:  <none>
    Mounts:
      /var/run/secrets/ from kube-api-access-2zr6k (ro)
Conditions:
  Type           Status
  PodScheduled   False
Volumes:
  kube-api-access-2zr6k:
    Type:                    Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds:  3607
    ConfigMapName:           kube-root-ca.crt
    ConfigMapOptional:       <nil>
    DownwardAPI:             true
QoS Class:                   BestEffort
Node-Selectors:              <none>
Tolerations:                 op=Exists for 300s
                             op=Exists for 300s
Events:
  Type     Reason            Age                   From               Message
  ----     ------            ----                  ----               -------
  Warning  FailedScheduling  2m53s (x485 over 8h)  default-scheduler  0/1 nodes are available: 1 node(s) had taint { }, that the pod didn't tolerate.
```

1 Answer

Your node is not available for scheduling, as the message states:

```
message: '0/1 nodes are available: 1 node(s) had taint { }, that the pod didn''t tolerate.'
```

You can check the availability of your nodes with the following command:

```
kubectl get nodes -o wide
```

It's also easier to diagnose this from the default output of `kubectl describe pod` than from the YAML output: the Events section at the end shows the `FailedScheduling` warning directly.
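As for what `0/1` means: the READY column is ready containers over total containers in the pod, so `0/1` says the pod's single container is not ready. A `Pending` pod has not been assigned to a node yet (note `Node: <none>` in the describe output), so its container never starts and READY cannot reach `1/1` until the scheduling problem is fixed. As a minimal sketch, assuming a POSIX shell with `awk`, the hypothetical helper `not_ready` below filters `kubectl get pod` output down to the pods whose ready count is below their container count:

```shell
#!/bin/sh
# Hypothetical helper (not part of kubectl): reads `kubectl get pod` output
# on stdin and prints NAME, READY and STATUS for every pod whose number of
# ready containers is lower than its total number of containers.
not_ready() {
  awk 'NR > 1 && NF >= 3 {        # skip the header line and any blank lines
    split($2, r, "/")             # READY column, e.g. "0/1" -> r[1]=0, r[2]=1
    if (r[1] + 0 < r[2] + 0)      # fewer ready containers than declared
      printf "%s %s %s\n", $1, $2, $3
  }'
}

# Usage against a live cluster:
#   kubectl get pod | not_ready
```

For any pod it lists with STATUS `Pending`, the `Taints:` field in `kubectl describe node <node-name>` (the node name is shown by `kubectl get nodes`) tells you which taint the scheduler is referring to.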