Intuition on Wald's equation without using the optional stopping theorem.

Wald's equation, even in the simple form stated below, simplifies many problems that involve computing an expectation.

Wald's Equation: Let $(X_n)_{n\in\mathbb{N}}$ be a sequence of real-valued, independent and identically distributed random variables and let $N$ be a nonnegative integer-valued random variable that is independent of the sequence $(X_n)_{n\in\mathbb{N}}$. Suppose that $N$ and the $X_n$ have finite expectations. Then $$ \operatorname{E}[X_1+\dots+X_N]=\operatorname{E}[N] \cdot\operatorname{E}[X_n]\quad \forall n\in\mathbb{N}\,. $$
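A quick Monte Carlo check can make the statement concrete. The setup below is a hypothetical one chosen only for illustration: $N$ is uniform on $\{0,1,\dots,10\}$ (so $\operatorname{E}[N]=5$) and is drawn independently of i.i.d. $X_i \sim \mathrm{Uniform}(0,1)$ (so $\operatorname{E}[X_n]=1/2$). Wald's equation then predicts $\operatorname{E}[X_1+\dots+X_N] = 2.5$.

```python
import random


def simulate_random_sum(trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of E[X_1 + ... + X_N].

    Illustrative assumptions (not from the question): N is uniform on
    {0, 1, ..., 10}, so E[N] = 5, and the X_i are i.i.d. Uniform(0, 1),
    so E[X_i] = 0.5. N is drawn independently of the X_i.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        n = rng.randint(0, 10)  # randint includes both endpoints
        total += sum(rng.random() for _ in range(n))
    return total / trials


estimate_mean = simulate_random_sum(200_000)
print(f"simulated E[S_N] = {estimate_mean:.3f}, Wald predicts E[N]*E[X] = {5 * 0.5}")
```

The simulated value should land close to 2.5; changing the distribution of $N$ or of the $X_i$ changes both sides of the equation together.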

I am looking for an intuitive explanation of Wald's equation that does not use the optional stopping theorem. I'm not interested in explanations of the error $ \operatorname{E}[X_1+\dots+X_N]=N\cdot \operatorname{E}[X_1] $, nor in explanations that only discuss the hypotheses.

We could have, for example, a function $\varphi$ of two or more variables such that $\operatorname{E}[X_1+\dots+X_N]=\varphi\big(\operatorname{E}[N]\, ,\,\operatorname{E}[X_n]\big), \quad\forall n\in\mathbb{N}\,$. The question then becomes: why does $\varphi(x,y)$ equal $x\cdot y$?

More generally, we could have two linear functionals $F : L^1(\Omega,\mathcal{A},P)\to \mathbb{R}$ and $G : L^1(\Omega,\mathcal{A},P)\to \mathbb{R}$ such that $\operatorname{E}[X_1+\dots+X_N]=\varphi\big(\operatorname{F}[N]\, ,\,\operatorname{G}[X_n]\big), \quad\forall n\in\mathbb{N}\,.$ The question would then be: why are $\operatorname{F}=\operatorname{G}=\operatorname{E}$ and why does $\varphi(x,y)$ equal $x\cdot y$?

My interest is in the intuition behind the equation. An answer based on a good example would be very welcome.

Thanks in advance.


One simple intuitive explanation is that $$ \mathbb{E}[X_1+\cdots+X_N \mid N=n] = n\mathbb{E}[X_1], $$ so it follows that $$ \mathbb{E}[X_1+\cdots+X_N] = \mathbb{E}\big[\mathbb{E}[X_1+\cdots+X_N \mid N]\big] = \mathbb{E}\big[N \mathbb{E}[X_1]\big] = \mathbb{E}[N] \mathbb{E}[X_1]. $$ This works because $N$ is independent of the $X_i$: once we condition on $N=n$, the $X_i$ keep their original distribution, so we are just adding $n$ copies of the same random variable. The identity is not quite as trivial when $N$ is a stopping time.
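The conditioning argument can be checked exactly on a small hypothetical example: $X_i$ a fair die roll ($\mathbb{E}[X_1] = 7/2$) and $N$ uniform on $\{1,2,3\}$, independent of the rolls ($\mathbb{E}[N] = 2$). The sketch computes each $\mathbb{E}[X_1+\cdots+X_n]$ by enumerating all $6^n$ outcomes (without using the formula), then averages over $N$ with the tower property.

```python
from fractions import Fraction
from itertools import product

DIE = range(1, 7)  # hypothetical X_i: fair six-sided die, E[X_i] = 7/2


def conditional_mean(n: int) -> Fraction:
    """E[X_1 + ... + X_n], by brute-force enumeration of all 6**n outcomes."""
    outcomes = list(product(DIE, repeat=n))
    return Fraction(sum(sum(o) for o in outcomes), len(outcomes))


# Hypothetical N, independent of the dice: uniform on {1, 2, 3}, so E[N] = 2.
pmf_N = {1: Fraction(1, 3), 2: Fraction(1, 3), 3: Fraction(1, 3)}

# Tower property: E[S_N] = sum_n P(N = n) * E[S_N | N = n].
# Independence is what lets us replace E[S_N | N = n] by E[X_1 + ... + X_n].
lhs = sum((p * conditional_mean(n) for n, p in pmf_N.items()), Fraction(0))
mean_N = sum((n * p for n, p in pmf_N.items()), Fraction(0))
rhs = mean_N * Fraction(7, 2)
print(lhs, rhs)  # prints: 7 7
```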

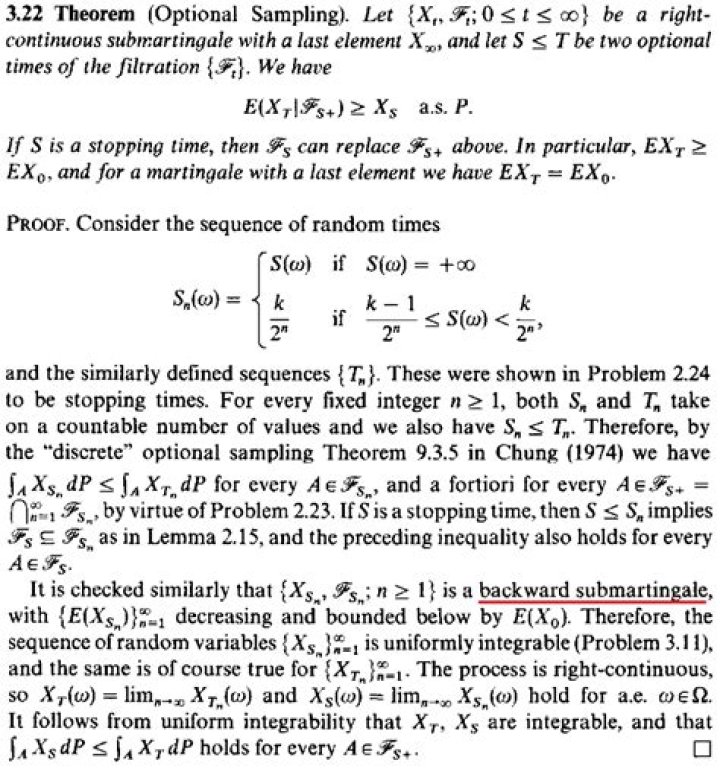

Here is a martingale approach to give some intuition, not to prove the theorem:

Consider a random walk $$S_n = S_0 + X_1 + \dots + X_n,$$ where the $X_i$ are i.i.d. with mean $E(X_i) = \mu$. Conditioning on the previous observations $A = (S_0, X_1, \dots, X_n)$, it is easy to prove that $$M_n = S_n - n\mu$$ is a martingale, namely: $$E[M_{n+1} - M_{n} \mid A] = E[ S_{n+1} - (n+1)\mu - S_n + n\mu \mid A]$$ $$ = E[X_{n+1} - \mu \mid A] = E[X_{n+1}] - \mu = \mu - \mu = 0,$$ where we used that $X_{n+1}$ is independent of $A$.

Then, intuitively, $$ S_n - S_0 = X_1 + \dots + X_n. $$ Taking expectations on both sides (linearity of expectation is enough here) we get $$E[S_n] - E[S_0] = E[X_1] + \dots + E[X_n], $$ or $$ E[S_n - S_0] = \mu + \dots + \mu = n \mu. $$

However, in Wald's setting the time $n$ is itself a random variable. In the theorem it is a stopping time $T$ (playing the role of $N$ in the question) with $P(T < \infty) = 1$ and $E[T] < \infty$. Since we have to stop the walk at time $T$, we look at the bounded time $\min(T,n)$. The martingale stopped at $\min(T,n)$ is still a martingale, so $E[M_{\min(T,n)}] = E[M_0]$, that is, $$ E[S_{\min(T,n)} - S_0] = \mu\, E[\min(T,n)]. $$ As $n \to \infty$ we have $\min(T,n) \to T$, and this becomes $$ E[S_T - S_0] = \mu\, E[T]. $$
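To see that the stopping-time version does not need $T$ to be independent of the steps, here is a small simulation under an assumed setup (not from the answer): roll a fair die until the first 6, let $T$ be the number of rolls and $S_T$ the sum of the rolls (so $S_0 = 0$). Here $T$ clearly depends on the rolls, since the last roll is always a 6, yet $E[T] = 6$ and $\mu = 7/2$, so the identity predicts $E[S_T] = 21$.

```python
import random


def roll_until_six(rng: random.Random) -> tuple[int, int]:
    """Roll a fair die until the first 6; return (T, S_T) with S_0 = 0.

    T is a stopping time: whether we stop after roll k depends only on
    rolls 1..k. T is *not* independent of the rolls (the last one is a 6).
    """
    t, s = 0, 0
    while True:
        x = rng.randint(1, 6)
        t += 1
        s += x
        if x == 6:
            return t, s


def simulate_stopped_walk(trials: int, seed: int = 1) -> tuple[float, float]:
    """Monte Carlo estimates of (E[T], E[S_T]) for the die example."""
    rng = random.Random(seed)
    sum_t = sum_s = 0
    for _ in range(trials):
        t, s = roll_until_six(rng)
        sum_t += t
        sum_s += s
    return sum_t / trials, sum_s / trials


mu = 3.5  # mean of one fair die roll
mean_T, mean_S = simulate_stopped_walk(100_000)
# The martingale M_n = S_n - n*mu has mean zero at the stopping time as well.
print(f"E[T] ~ {mean_T:.2f}, E[S_T] ~ {mean_S:.2f}, mu * E[T] ~ {mu * mean_T:.2f}")
```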

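There is more theory behind the above, but it belongs to a rigorous proof. For instance, $\min(T,n)$ increases to $T$, so $E[\min(T,n)] \uparrow E[T]$ by monotone convergence, and the rest of the walk after time $\min(T,n)$ becomes negligible: $$ \big|E[S_T - S_{\min(T,n)}]\big| \le E\Big[\sum_{m=n+1}^{T} |X_m|\Big] = E|X_1| \sum_{m>n} P(T \ge m) \to 0, $$ because the event $\{T \ge m\}$ depends only on $S_0, X_1, \dots, X_{m-1}$ (so it is independent of $X_m$), and $\sum_{m>n} P(T \ge m)$ is the tail of the convergent series $\sum_{m} P(T \ge m) = E[T]$. I hope that helps :)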
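The truncated identity $E[S_{\min(T,n)} - S_0] = \mu\, E[\min(T,n)]$ can be verified exactly for small $n$ by brute force in an assumed die example (roll a fair die until the first 6, $S_0 = 0$, $\mu = 7/2$). The sketch enumerates all $6^n$ equally likely roll sequences with exact fractions; it also shows $E[\min(T,n)] = 6\,(1-(5/6)^n)$ increasing towards $E[T] = 6$, which is the increasing limit used when letting $n \to \infty$.

```python
from fractions import Fraction
from itertools import product

MU = Fraction(7, 2)  # mean of one fair die roll (assumed example)


def truncated_means(n: int) -> tuple[Fraction, Fraction]:
    """Exact E[min(T, n)] and E[S_{min(T, n)}] for 'roll until the first 6'.

    Brute force: enumerate all 6**n equally likely sequences of n rolls and
    stop each one at the first 6 (or after n rolls if no 6 appears).
    """
    total_stop = total_sum = 0
    count = 0
    for rolls in product(range(1, 7), repeat=n):
        # Index (1-based) of the first 6, or n if there is none.
        stop = next((i + 1 for i, x in enumerate(rolls) if x == 6), n)
        total_stop += stop
        total_sum += sum(rolls[:stop])
        count += 1
    return Fraction(total_stop, count), Fraction(total_sum, count)


for n in range(1, 6):
    e_stop, e_sum = truncated_means(n)
    # Expect e_sum == MU * e_stop exactly, and e_stop creeping up towards 6.
    print(n, e_stop, e_sum, e_sum == MU * e_stop)
```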
