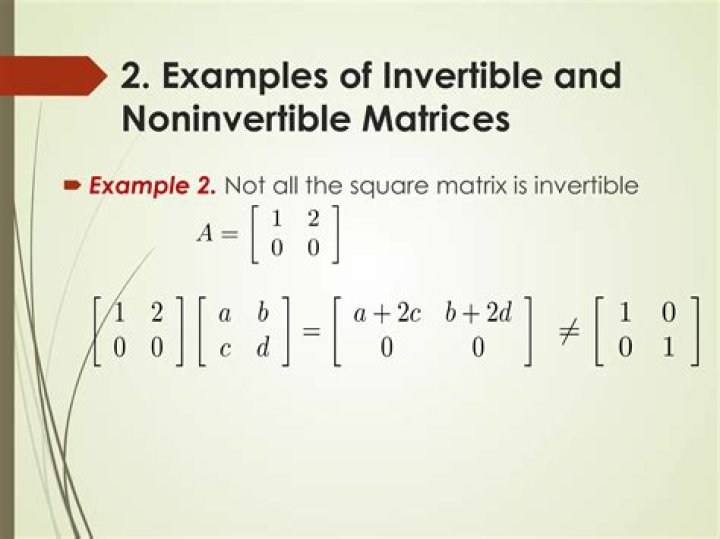

Intuition behind a matrix being invertible iff its determinant is non-zero

I have been wondering about this question since I was in school. How can one number tell so much about the whole matrix being invertible or not?

I know the proof of this statement now. But I would like to know the intuition behind this result and why this result is actually true.

The proof I have in mind is:

If $A$ is invertible, then

$$ 1 = \det(I) = \det(AA^{-1}) = \det(A)\cdot\det(A^{-1})$$

whence $\det(A) \neq 0$.

Conversely, if $\det(A) \neq 0$, we have

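$$ A \operatorname{adj}(A) = \operatorname{adj}(A) A = \det(A) I,$$

whence $A$ is invertible, with inverse $A^{-1} = \det(A)^{-1}\operatorname{adj}(A)$.

Here $\operatorname{adj}(A)$ is the adjugate matrix of $A$, defined by

$$ \operatorname{adj}(A)_{ji} = (-1)^{i+j}\det(A_{ij}),$$

where $A_{ij}$ is the matrix obtained from $A$ by deleting the $i$th row and $j$th column.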
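To make the adjugate identity concrete, here is a minimal sketch in plain Python that builds the adjugate from the cofactor formula and checks that multiplying by $A$ on either side gives $\det(A) I$. The helper names (`minor`, `det`, `adjugate`, `matmul`) and the sample matrix are my own choices for illustration, and cofactor expansion is used only because it mirrors the definition, not because it is efficient.

```python
def minor(a, i, j):
    """Return the matrix a with row i and column j deleted."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(a) if k != i]


def det(a):
    """Determinant by cofactor expansion along the first row."""
    if len(a) == 0:
        return 1  # determinant of the empty matrix, needed for 1x1 minors
    return sum((-1) ** j * a[0][j] * det(minor(a, 0, j)) for j in range(len(a)))


def adjugate(a):
    """adj(A)[j][i] = (-1)^(i+j) * det(A_ij), the transposed cofactor matrix."""
    n = len(a)
    return [[(-1) ** (i + j) * det(minor(a, i, j)) for i in range(n)]
            for j in range(n)]


def matmul(a, b):
    """Product of two square matrices of the same size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


# Sample matrix, chosen only for illustration.
A = [[2, 0, 1],
     [1, 3, 2],
     [1, 1, 4]]

d = det(A)
scaled_identity = [[d if i == j else 0 for j in range(3)] for i in range(3)]

# Both products equal det(A) * I, so adj(A) / det(A) inverts A when det(A) != 0.
left = matmul(A, adjugate(A))
right = matmul(adjugate(A), A)
```

Because the entries are integers, the check is exact; dividing `adjugate(A)` by `d` would give the inverse whenever `d` is non-zero.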

Any other insightful proofs are also welcome.

17 Answers

Here's an explanation for three-dimensional space ($3 \times 3$ matrices). That's the space I live in, so it's the one in which my intuition works best :-).

Suppose we have a $3 \times 3$ matrix $\mathbf{M}$. Let's think about the mapping $\mathbf{y} = f(\mathbf{x}) = \mathbf{M}\mathbf{x}$. The matrix $\mathbf{M}$ is invertible iff this mapping is invertible. In that case, given $\mathbf{y}$, we can compute the corresponding $\mathbf{x}$ as $\mathbf{x} = \mathbf{M}^{-1}\mathbf{y}$.

Let $\mathbf{u}$, $\mathbf{v}$, $\mathbf{w}$ be 3D vectors that form the columns of $\mathbf{M}$. We know that $\det{\mathbf{M}} = \mathbf{u} \cdot (\mathbf{v} \times \mathbf{w})$, which is the (signed) volume of the parallelepiped having $\mathbf{u}$, $\mathbf{v}$, $\mathbf{w}$ as its edges.

Now let's consider the effect of the mapping $f$ on the "basic cube" whose edges are the three axis vectors $\mathbf{i}$, $\mathbf{j}$, $\mathbf{k}$. You can check that $f(\mathbf{i}) = \mathbf{u}$, $f(\mathbf{j}) = \mathbf{v}$, and $f(\mathbf{k}) = \mathbf{w}$. So the mapping $f$ deforms (shears, scales) the basic cube, turning it into the parallelepiped with sides $\mathbf{u}$, $\mathbf{v}$, $\mathbf{w}$.

Since the determinant of $\mathbf{M}$ gives the volume of this parallelepiped, it measures the "volume scaling" effect of the mapping $f$. In particular, if $\det{\mathbf{M}} = 0$, this means that the mapping $f$ squashes the basic cube into something flat, with zero volume, like a planar shape, or maybe even a line. A "squash-to-flat" deformation like this can't possibly be invertible because it's not one-to-one: several points of the cube will get "squashed" onto the same point of the deformed shape. So, the mapping $f$ (or the matrix $\mathbf{M}$) is invertible if and only if it has no squash-to-flat effect, which is the case if and only if the determinant is non-zero.
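The squashing picture can be checked numerically. The following is a small sketch in plain Python, with helper names (`cross`, `dot`, `det3`, `apply_map`) and example vectors that are my own assumptions: it computes the triple product for a sheared box and for a flat one, and shows two different corners of the basic cube landing on the same point in the flat case.

```python
def cross(v, w):
    """Cross product of two 3D vectors."""
    return [v[1] * w[2] - v[2] * w[1],
            v[2] * w[0] - v[0] * w[2],
            v[0] * w[1] - v[1] * w[0]]


def dot(u, v):
    """Dot product of two vectors."""
    return sum(a * b for a, b in zip(u, v))


def det3(u, v, w):
    """Determinant of the matrix with columns u, v, w, as u . (v x w)."""
    return dot(u, cross(v, w))


def apply_map(u, v, w, x):
    """Compute M x, where M has columns u, v, w."""
    return [x[0] * u[i] + x[1] * v[i] + x[2] * w[i] for i in range(3)]


u, v = [1, 0, 0], [0, 1, 0]

# A sheared box: w leaves the plane of u and v, so the volume is non-zero.
w_sheared = [1, 1, 2]
sheared_volume = det3(u, v, w_sheared)

# A flat "box": w = u + v lies in the plane of u and v, so the volume is zero.
w_flat = [1, 1, 0]
flat_volume = det3(u, v, w_flat)

# Two distinct corners of the basic cube are squashed onto the same point.
p = apply_map(u, v, w_flat, [1, 1, 0])
q = apply_map(u, v, w_flat, [0, 0, 1])
```

Here `p` and `q` coincide even though the inputs differ, which is exactly the failure of one-to-one-ness described above.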

I know this is pretty old, but for the people who might find this in a Google search (I know I did), I thought I'd add this.

Remember that the space of all $n \times n$ matrices is isomorphic to the space of all operators on an $n$-dimensional vector space. In other words, the matrix is just some linear operator. Recall that the determinant is the product of the eigenvalues (counted with algebraic multiplicity, over $\mathbb{C}$). Both $ \mathbb{C} $ and $ \mathbb{R} $ are integral domains, so if the determinant is $0$ then you have a zero eigenvalue. That means, if your matrix is $A$, there exists a vector $ \overline{v} \neq \overline{0} $ such that $ (A-0I)\overline{v}=\overline{0} $, which means $ A\overline{v}=\overline{0} $. Clearly $ \overline{0} $ gets sent to $ \overline{0} $, but so does some other non-zero vector. So the transformation isn't injective and, thus, not invertible. This is just the intuition though.
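As a small illustration (the matrix is my own choice, not one from the discussion), take

$$ A = \begin{pmatrix} 1 & 2 \\ 2 & 4 \end{pmatrix}, \qquad \det(A) = 1\cdot 4 - 2\cdot 2 = 0.$$

Its characteristic polynomial is $\lambda^2 - 5\lambda$, so the eigenvalues are $0$ and $5$, and their product is $0$ as expected. The vector $\overline{v} = (2, -1)^T$ is an eigenvector for the eigenvalue $0$:

$$ A\overline{v} = \begin{pmatrix} 1\cdot 2 + 2\cdot(-1) \\ 2\cdot 2 + 4\cdot(-1) \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix},$$

so both $\overline{0}$ and $\overline{v}$ are sent to $\overline{0}$, and no inverse map can exist.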

The absolute value of the determinant of a matrix is the volume of the parallelepiped spanned by the column vectors of that matrix.

Michael

Another classical way may be easier to follow: note that the determinant does not change if we add a multiple of one row to another row, or a multiple of one column to another column. Using such operations we can reduce $A$ to a diagonal matrix $B$ with $\det(B) = \det(A)$. The matrix $B$ differs from $A$ only by factors that are the elementary matrices of these operations, and these are invertible. So $A$ is invertible iff $B$ is invertible iff $\det(B) \neq 0$ iff $\det(A) \neq 0$.

I personally think of the determinant as a function $f(A)$ that has the following three properties:

$f(AB) = f(A) f(B)$

$f(T)$ = product of the diagonal entries for a triangular matrix $T$

$f(E) \neq 0$ for an elementary matrix $E$

An elementary matrix $E$ is a matrix such that $EA$ either multiplies a row of $A$ by a nonzero scalar, swaps two rows of $A$, or adds a multiple of one row of $A$ to another.

Now Gaussian elimination is the process of applying elementary matrices to $A$ and obtaining an upper triangular matrix $T$. That is, every matrix can be written as $$ A = (E_1 \cdots E_n)^{-1} T$$

which means $$f(A) = f(E_1)^{-1} \cdots f(E_n)^{-1} f(T)$$ and $f(A)$ is nonzero if and only if $f(T)$ is nonzero. But a matrix is invertible if and only if its Gaussian-eliminated form $T$ has only nonzero entries on the diagonal, which is exactly the condition $f(T) \neq 0$.
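The elimination argument translates almost directly into code. Below is a minimal sketch, assuming exact rational arithmetic via Python's `fractions.Fraction`; the function name `det_by_elimination` is hypothetical. It uses only row swaps (each of which flips the sign of the determinant) and additions of a multiple of one row to another (which leave it unchanged), then multiplies the diagonal of the resulting triangular matrix.

```python
from fractions import Fraction


def det_by_elimination(a):
    """Determinant via Gaussian elimination to upper triangular form."""
    m = [[Fraction(x) for x in row] for row in a]  # exact copy of the input
    n = len(m)
    sign = 1
    for c in range(n):
        # Find a row at or below the diagonal with a nonzero entry in column c.
        pivot = next((r for r in range(c, n) if m[r][c] != 0), None)
        if pivot is None:
            # The triangular form would have a zero on its diagonal.
            return Fraction(0)
        if pivot != c:
            m[c], m[pivot] = m[pivot], m[c]  # a row swap flips the sign
            sign = -sign
        for r in range(c + 1, n):
            factor = m[r][c] / m[c][c]
            # Subtracting a multiple of the pivot row keeps the determinant.
            m[r] = [x - factor * y for x, y in zip(m[r], m[c])]
    result = Fraction(sign)
    for i in range(n):
        result *= m[i][i]  # product of the diagonal of the triangular matrix
    return result


invertible_example = det_by_elimination([[2, 0, 1], [1, 3, 2], [1, 1, 4]])
swap_example = det_by_elimination([[0, 1], [1, 0]])
singular_example = det_by_elimination([[1, 2], [2, 4]])
```

The early return is the code-level version of the statement above: a missing pivot means $T$ has a zero on its diagonal, so $f(T) = 0$ and the matrix is not invertible.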

In my mind, any other definitions of the determinant, such as the practical way of computing it with adjugate matrices and such, are not the "real" or "primary" definition of the determinant. We want the determinant function to satisfy the above three properties. We also probably want the determinant to be a polynomial in the entries of our matrix. This then should give us the method of computing the determinant.

With all of the elegant ways of thinking about the determinant, I fear it is sometimes not emphasized enough that the easiest way to discover the determinant is to just solve the linear system \begin{align} a_{11} x_1 + a_{12} x_2 &= b_1 \\ a_{21} x_1 + a_{22} x_2 &= b_2 \end{align} by hand. When you do this, out pops the determinant! And we see immediately that if the determinant is $0$ then our solution doesn't work (because then we would be dividing by $0$). A similar computation can be done for $3 \times 3$ systems, etc.
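For the $2 \times 2$ case the elimination can be written out in full. Multiplying the first equation by $a_{22}$, the second by $a_{12}$, and subtracting eliminates $x_2$:

$$ (a_{11}a_{22} - a_{12}a_{21})\, x_1 = a_{22} b_1 - a_{12} b_2.$$

Multiplying the second equation by $a_{11}$, the first by $a_{21}$, and subtracting eliminates $x_1$:

$$ (a_{11}a_{22} - a_{12}a_{21})\, x_2 = a_{11} b_2 - a_{21} b_1.$$

The same factor $a_{11}a_{22} - a_{12}a_{21}$ appears in front of both unknowns, and we can divide by it to solve for $x_1$ and $x_2$ exactly when it is non-zero.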

This is probably how the determinant was first discovered. It is so easy that a middle school student can do it, and at the moment of discovery we see that a nonzero determinant guarantees that $Ax = b$ has a unique solution.

Consider $A$, an $n \times n$ matrix, as a mapping from $\mathbb{R}^n$ to $\mathbb{R}^n$. If the determinant is zero, the columns of $A$ are linearly dependent. This means that the nullspace of $A$ is nontrivial: it contains some nonzero vector $v$. Hence the linear mapping $A$ is not invertible, since several $x$ get mapped to the same value $b$; that is, $Ax = b$ has multiple solutions (infinitely many, in fact) whenever it has a solution at all. For example, both $x$ and $x + v$ get mapped to $b$, where $v$ is any vector in the nullspace of $A$.
