I want to extract a link from an HTML page to download my images, and I have several thousand of these HTML files. How do I go about this?

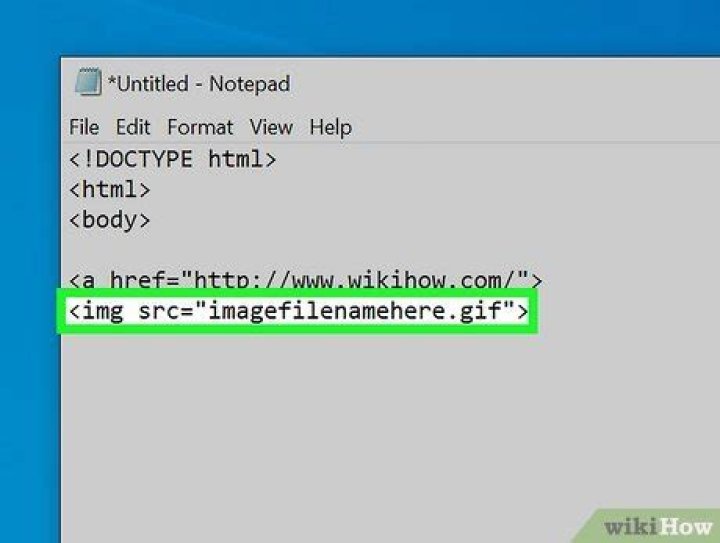

I have HTML files that each contain a specific portion I'd like to extract. The addresses of these HTML pages are in a text file. A sample HTML page taken from this text file would look like this, and I'd want to get the part 009514HB.JPG, which is different for every HTML file.

My .txt file would be something like this -

If I open one of those HTML pages in a text editor, I can find the information I need:

*** some code here***

<figure> <a href="/imagelibrary/large/009514HB.JPG" target="_blank"><img src="/imagelibrary/medium/009514HB.JPG" alt="acne keloidalis nuchae"/></a>

</figure>

...Now I would like to get these numbers from the various HTML files, and then append these numbers to . For example, I would want my final txt file to have URLs which are like this, [slightly NSFW] That would make it easier for me to wget the images! I don't know a lot about sed or awk, so any sort of advice/help would be great.

Thanks!

tl;dr: The links point to a webpage, not an image, so when I wget I'm downloading HTML pages rather than the images I want. This is how I think I could do it, but any better solutions would be helpful too!

12 Answers

Depending on the complexity of the input files, I suggest not trying to parse HTML with awk, grep and such, but using an HTML parser instead. For similar tasks I use lynx, the text mode browser. To install it, a simple `sudo apt install lynx` is sufficient. Then:

    for file in *.html; do
        lynx -dump -listonly -nonumbers "$file" >> links.txt
    done

For your sample snippet it creates the following output:

    file:///imagelibrary/large/009514HB.JPG

When done, the file:// part needs to be replaced with a proper base URL:

    sed -i 's|file://| links.txt

Result:
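
A minimal sketch of that substitution, assuming a placeholder base URL of `https://example.com` (not the real site) and a hypothetical helper named `to_absolute_urls`. It writes to standard output instead of editing links.txt in place, and it assumes the base URL contains no `|` or `&` characters, which sed would treat specially:

```shell
# Rewrite file:// links read from stdin into absolute URLs on stdout.
# $1 is the base URL (scheme and host, no trailing slash).
to_absolute_urls() {
    # '|' is the sed delimiter so the slashes in the URL need no escaping.
    sed "s|^file://|$1|"
}

# Demo with the sample link and the placeholder base URL.
printf '%s\n' 'file:///imagelibrary/large/009514HB.JPG' |
    to_absolute_urls 'https://example.com'
# prints https://example.com/imagelibrary/large/009514HB.JPG
```

On the real data this could be run as `to_absolute_urls 'https://example.com' < links.txt > urls.txt`, and the resulting list handed to `wget -i urls.txt`.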

Breaking it down into steps, you want to:

- Process a bunch of files (named `*.html`?).
- Extract lines like `<a href="/imagelibrary/large/009514HB.JPG" ...`.
- Extract the filename part ("009514HB.JPG").
- Produce text using the filename part.

    find . -type f -name '*.html' -print0 | \
        xargs -0 -r grep --no-filename "a href=" | \
        grep -E -o '[0-9A-Z]+\.JPG'

Then, by wrapping the above inside a for $() construct, we get:

    for i in $(
        find . -type f -name '*.html' -print0 | \
            xargs -0 -r grep --no-filename "a href=" | \
            grep -E -o '[0-9A-Z]+\.JPG'
    ) ; do
        echo ""
    done

Of course, read man find, man xargs, man grep.
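
As a rough sketch of the same idea with the URL spelled out: the variant below reads the filenames in a `while read` loop and prints one URL per image. The host `https://example.com` is a placeholder, the `/imagelibrary/large` path is taken from the sample snippet, and `list_image_urls` is a hypothetical name. Since the sample line mentions the filename twice (once in `href`, once in `src`), `sort -u` is added to drop the duplicates.

```shell
# Placeholder host plus the path seen in the sample; substitute the real site.
base_url='https://example.com/imagelibrary/large'

# Print one download URL per image filename found in *.html files under $1.
list_image_urls() {
    find "$1" -type f -name '*.html' -exec grep -h 'a href=' {} + |
        grep -E -o '[0-9A-Z]+\.JPG' |
        sort -u |
        while IFS= read -r name; do
            printf '%s/%s\n' "$base_url" "$name"
        done
}

# Demo on a throwaway directory holding the sample snippet.
demo_dir=$(mktemp -d)
cat > "$demo_dir/sample.html" <<'EOF'
<figure> <a href="/imagelibrary/large/009514HB.JPG" target="_blank"><img src="/imagelibrary/medium/009514HB.JPG" alt="acne keloidalis nuchae"/></a>
</figure>
EOF
list_image_urls "$demo_dir"
# prints https://example.com/imagelibrary/large/009514HB.JPG
rm -r "$demo_dir"
```

Redirecting the output to a file, for example `list_image_urls . > urls.txt`, gives a list that `wget -i urls.txt` can download from.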