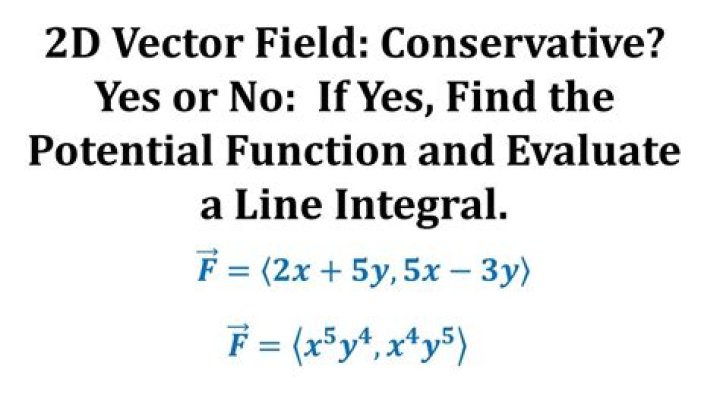

Function of a vector vs function of variables

Suppose I have a function $f$ such that $f$ is a function of the vector

$\vec{x}=(x_1,\ldots,x_n)$, i.e. $f=g[\vec{x}]=g[(x_1,\ldots,x_n)]$.

Is there any difference between this and $f$ being a function of the variables $x_1,\ldots,x_n$, i.e. $f=g(x_1,\ldots,x_n)$?


The usual set-theoretic way to define a function of several variables $x_1, x_2, \ldots, x_n$ is to take as its domain the Cartesian product $X_1 \times X_2 \times\dots\times X_n$ of the sets $X_1, X_2, \ldots, X_n$ in which the respective variables are allowed to take values. Sometimes it is only possible or relevant to take a proper subset of this Cartesian product as the domain.

So if the function is called $f$, then technically $f\left( (x_1, x_2, \ldots, x_n )\right)$ is the formally correct notation for a value of this function, provided we agree to use round parentheses both for members of the Cartesian product (tuples, or "vectors" as we may call them, even if we require no linear structure) and for function application $f(\cdot )$.

So you could say that:

$$f(x_1, x_2, \ldots, x_n )$$

is just a shorter notation for:

$$f\left( (x_1, x_2, \ldots, x_n )\right)$$

Therefore, my answer is: no, there is no difference.
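To make the notational point concrete, here is a minimal Python sketch. The names `g_vec` and `g_vars` and the sum-of-squares rule are hypothetical, chosen only for illustration. It suggests that a function of one tuple and a function of $n$ separate arguments carry the same information, since each can be obtained from the other by packing or unpacking.

```python
def g_vec(x):
    """Hypothetical function of a single tuple x = (x_1, ..., x_n)."""
    return sum(xi ** 2 for xi in x)


def g_vars(*xs):
    """The same rule written as a function of n separate variables."""
    # *xs collects the separate arguments into a tuple, i.e. a point of the
    # Cartesian product, and hands it to the tuple version.
    return g_vec(xs)


x = (1.0, 2.0, 3.0)
value_from_tuple = g_vec(x)        # plays the role of f((x_1, ..., x_n))
value_from_variables = g_vars(*x)  # plays the role of f(x_1, ..., x_n)
```

Both calls evaluate the same rule on the same data; only the calling convention differs.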

In mathematical analysis, a function is a map between two affine spaces, so, technically speaking, there is no such thing as a function of multiple variables. If $ X $ and $ Y $ are affine spaces, a function is just $ f : X \to Y $, so for any $ \vec a \in X $ you get $ f : \vec a \mapsto f ( \vec a) $. What you understand as a function of the variables $ x_1, x_2, \dots, x_n $ is actually a function of a vector $ \vec x \in \mathbb R^n $ whose components are $ \vec x^i = x_i $ (superscript notation for vector components, subscript for sequence members).

tl;dr: Yes, they are the same.

EDIT: The spaces don't have to be affine if you don't want to do things such as derivatives.

I am going to take a dissenting view. There is a difference between a function of a vector and a function of several variables. Vectors have an inherent geometric meaning: they come from a vector space whose topological and algebraic structure relates the coordinates of the vector to one another. For a function of several variables, we do not inherently get any such relationship between the coordinates.

Consider the following example. Let $f:\mathbb R^2\to\mathbb R$ be defined by $$f(\vec x)=\langle\vec x,\vec a\rangle,$$ where $\vec a$ is some constant vector and $\langle\cdot,\cdot\rangle$ is the standard inner product on $\mathbb R^2$. Find $$\Vert\nabla f(\vec x)\Vert.$$ It is not hard to see that the answer is $\Vert \vec a\Vert$. This answer should be independent of any coordinate system because vector spaces do not have intrinsic coordinates. Indeed, I was careful to define the function in coordinate-free form so as to avoid making a choice of coordinates. So if we represent this function in, say, polar coordinates, we should get the same answer.

In standard polar coordinates, this function still takes a vector as its input, but we usually write it like a function of two variables: $$f(r,\theta)=a_1r\cos\theta+a_2r\sin\theta,$$ where $a_1$ and $a_2$ are the Cartesian coordinates of $\vec a$. Knowing that in Cartesian coordinates the gradient takes the form $$\nabla f(\vec x)=\frac{\partial}{\partial x_1} f(\vec x)\,\hat e_1+\frac{\partial}{\partial x_2}f(\vec x)\,\hat e_2,$$ where $\hat e_1$ and $\hat e_2$ are the standard unit vectors, many a beginning multivariable calculus student is tempted to write $$\nabla f(r,\theta)=\frac{\partial}{\partial r} f(r,\theta)\,\hat r+\frac{\partial}{\partial\theta}f(r,\theta)\,\hat\theta,$$ with $\hat r$ and $\hat\theta$ the orthonormal polar unit vectors. But this is not correct. If we try it, we get $$\color{red}{\Vert\nabla f(r,\theta)\Vert=\sqrt{(a_1\cos\theta+a_2\sin\theta)^2+(-a_1r\sin\theta+a_2r\cos\theta)^2}},$$ which depends on $r$ and $\theta$, so it is wrong (which is why I put it in red). The reason that this is not correct is that taking the gradient in this way presupposes that $f$ is a function of two unrelated variables $r$ and $\theta$, rather than a function of the vector $\vec x$.

The more advanced multivariable calculus student knows that if $f$ is a function of a vector represented in polar coordinates, then the gradient takes the form $$\nabla f(r,\theta)=\frac{\partial}{\partial r} f(r,\theta)\,\hat r+\frac{1}{r}\frac{\partial}{\partial\theta}f(r,\theta)\,\hat\theta.$$ With this gradient the factor of $r$ in the angular term cancels, giving $$\Vert\nabla f(r,\theta)\Vert^2=(a_1\cos\theta+a_2\sin\theta)^2+(-a_1\sin\theta+a_2\cos\theta)^2=a_1^2+a_2^2,$$ so even when calculated in polar coordinates, $$\Vert\nabla f(\vec x)\Vert=\Vert\vec a\Vert.$$ The takeaway here is that when treating my function of a vector as a function of two variables, I had to remember that the two variables are related to each other.
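The polar-coordinate computation above can be checked numerically. The following is a rough Python sketch, not part of the original argument: the vector $\vec a=(3,4)$, the sample points, the step size `h`, and all function names are assumptions made for illustration. It estimates the partial derivatives by central differences and compares the naive norm with the one that includes the $1/r$ factor.

```python
import math


def f_polar(r, theta, a):
    """f(x) = <x, a> written in polar coordinates (r, theta)."""
    a1, a2 = a
    return a1 * r * math.cos(theta) + a2 * r * math.sin(theta)


def partials(r, theta, a, h=1e-6):
    """Central-difference estimates of df/dr and df/dtheta (h is an assumed step)."""
    df_dr = (f_polar(r + h, theta, a) - f_polar(r - h, theta, a)) / (2 * h)
    df_dtheta = (f_polar(r, theta + h, a) - f_polar(r, theta - h, a)) / (2 * h)
    return df_dr, df_dtheta


def naive_norm(r, theta, a):
    """Treats r and theta as unrelated variables: no 1/r factor."""
    df_dr, df_dtheta = partials(r, theta, a)
    return math.hypot(df_dr, df_dtheta)


def polar_norm(r, theta, a):
    """Uses the polar gradient: the angular component is (1/r) df/dtheta."""
    df_dr, df_dtheta = partials(r, theta, a)
    return math.hypot(df_dr, df_dtheta / r)


a = (3.0, 4.0)  # illustrative choice, so that ||a|| = 5
points = [(0.5, 0.3), (2.0, 1.1), (5.0, 2.5)]  # arbitrary (r, theta) samples

naive = [naive_norm(r, t, a) for r, t in points]
correct = [polar_norm(r, t, a) for r, t in points]
```

Under these assumptions, every entry of `correct` should agree with $\Vert\vec a\Vert$ up to finite-difference error, while the entries of `naive` vary from point to point.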

In summary, a function of a vector can be described as a function of several variables, but we need to be careful to remember the geometric meaning of these variables in relation to each other. A function that is merely a function of several variables, and not a function of a vector, has no such restrictions, but there is also no notion of gradient for such a function.
