Factoring A Matrix Polynomial [duplicate]

I'm working my way through this paper: Down with Determinants!

In Section 2 (pretty much right off the bat) he gives his determinant-less proof that every finite-dimensional complex linear operator has an eigenvalue. First he says that a vector and its images under the transformation, repeated n times, can't be linearly independent, and defines $a_i$ to be the coefficients of the linear combination.

$$ a_0v + a_1Tv + \cdots + a_nT^nv=0 $$

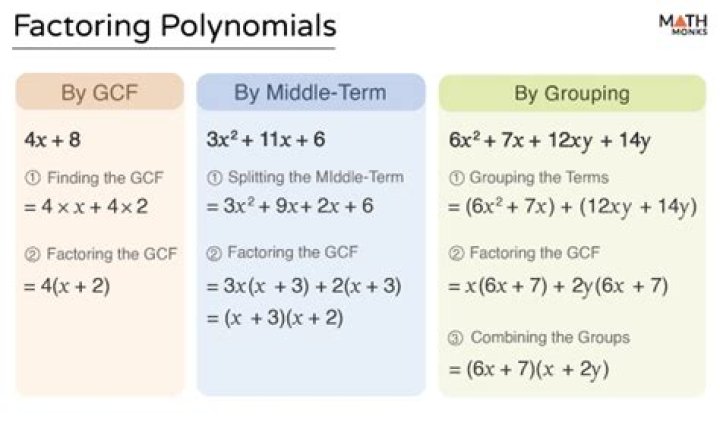

Then he makes a (non-matrix) polynomial of those coefficients and factors it.

$$ a_0 + a_1z + \cdots + a_nz^n = c(z-r_1)\cdots(z-r_m) $$

The above holds for any complex number $z$. I understand everything so far, but then he has this step, which seems to use the first equation as a polynomial of matrices:

$$ 0=(a_oI + a_1T + \cdots + a_nT^n)v = c(T-r_1I)\cdots(T-r_mI)v $$

This seems totally non-obvious to me. I can sort of see this as an analogy with the factorization of the regular polynomial, and I verified it by hand with a generic 2-dimensional T, but I'm not sure why it works in the general case.

My main questions:

- What justifies the last step? Does it necessarily use the 2nd equation, or is that just sort of by analogy?

- In the last part, we've basically factored a polynomial of matrices. The "roots" are all constants times the identity matrix. Is this always true, or could the roots be any matrix?

1 Answer

$\begingroup$To simplify my life, I will take the coefficients below (example, $p_k$) as being defined for all $k$ but only non zero for a finite number of non negative integers.

If $p(x) = \sum_k p_k x^k$ we define $p(A) = \sum_k p_k A^k$.

For the first question:

If $p(x) = a(x)b(x)$, where $a,b$ are polynomials, then $p(x) = \sum_i a_i x^i \sum_j b_j x^{j} = \sum_i \sum_j a_i b_j x^{i+j} = \sum_k \sum_i a_i b_{k-i} x^k$, hence $p_k = \sum_i a_i b_{k-i}$ (this follows since the functions $x \mapsto x^k$ are linearly independent).

Then we have $p(A) = \sum_k \sum_i a_i b_{k-i} A^k = \sum_i \sum_i a_i b_j A^{i+j} = \sum_i a_i A^i \sum_j b_j A^{j} = a(A) b(A)$.

It is easy to see that since the $A^k$ commute that $a(A)b(A) = b(A) a(A)$.

Consequently, if I write $p(x) = f_1(x)\cdots f_m(x)$, where the $f_k$ are polynomials, then $p(A) = f_1(A) \cdots f_m(A)$. (Also, the order doesn't matter.)

If we have $p(z) = a_0 + a_1z + \cdots + a_nz^n = c(z-r_1)\cdots(z-r_m)$, then it follows that $p(T) =a_0 + a_1 T + \cdots + a_n T^n = c(T-r_1 I)\cdots(T-r_m I)$. Since you have $p(T)v = 0$, the result follows.

For the second question:

Suppose $p(A) = 0$ for some matrix $A$. Then any eigenvalue $\lambda$ of $A$ satisfies $p(\lambda) = 0$. To see this, suppose $V^{-1}A V = J$, where $J$ is the Jordan normal form. Then you can see that $p(A) = p(V J V^{-1}) = V p(J) V^{-1}$, so we have $p(J) = 0$. (In fact, we have $p(J_i) = 0$ for all of the Jordan blocks.) Now note that the diagonal elements of $p(J)$ are $p(\lambda_k)$, where the $\lambda_k$ are the eigenvalues of $J$ (that is, $A$), and so $p(\lambda_k) = 0$.

As an aside, since $p(J_i) = 0$ for all of the Jordan blocks, a little work shows that if $J_i$ is of size $d \times d$ and the eigenvalue is $\lambda$, then $p^{(i)}(\lambda) = 0$, for $i=0,...,d-1$, so in fact we see that $x \mapsto (x-\lambda)^d$ divides $p$.

$\endgroup$ 8