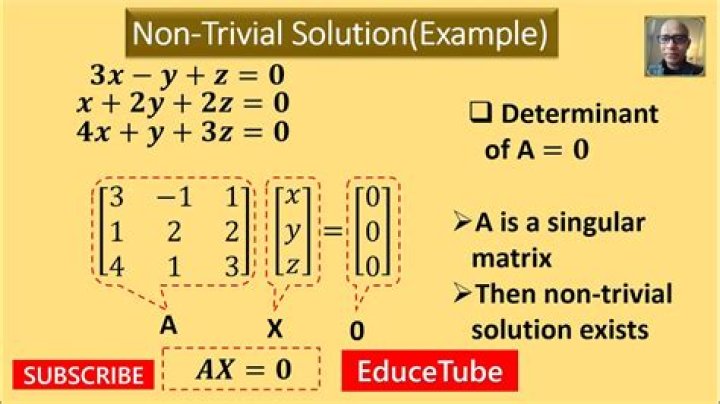

Existence of non-trivial solutions for $Ax=0$

I would like to prove the following claim:

For a square matrix $A$ there exists a non-zero vector $x$ such that $Ax=0$ if and only if $A$ is singular.

Is this claim correct?

I was able to show that if $x$ exists, $A$ is singular.

How do I prove the right-to-left direction (i.e., if $A$ is singular, then there is a non-zero $x$ such that $Ax=0$)?

UPD:

I'm assuming the following definition:

A matrix $A$ is singular if it is not invertible (i.e., there does not exist a matrix $B$ such that $AB=BA=I$, where $I$ is the identity matrix).

I also know that $A$ is singular iff $\det(A)=0$.

$\endgroup$ 23 Answers

$\begingroup$Let us assume that $A$ is singular. This means that for some $x \ne y$ we have $Ax = Ay$. (Otherwise $A$ would be injective; look at what $A$ does to a basis of the vector space and you'll see that it would then map the basis to a basis, so it would have to be nonsingular.)

What can you say about $A(x - y)$?

$\endgroup$ 1 $\begingroup$This claim is correct! If $A$ is singular, then the column vectors of $A$ are not linearly independent (that is, they are linearly dependent).

If $A = [\vec{u_1},\vec{u_2},\dots, \vec{u_n}]$, then there exist $\alpha_1,\alpha_2,\dots, \alpha_n \in \mathbb{F}$ (probably $\mathbb{F} = \mathbb{R}$ or $\mathbb{F} = \mathbb{C}$ in your case), not all zero, such that

$$
\vec{u_1}\alpha_1 + \vec{u_2}\alpha_2+\dots + \vec{u_n} \alpha_n =\vec{0}
$$

So take your vector to be $\vec{x} := [\alpha_1,\alpha_2,\dots, \alpha_n]^{T}$. The product $A\vec{x}$ is exactly the linear combination above, so $A\vec{x} = \vec{0}$, and $\vec{x} \neq \vec{0}$ because not all of the $\alpha_i$ are zero.
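To make the construction concrete, here is a minimal sketch that looks for such coefficients $\alpha_i$ by Gauss-Jordan elimination in exact rational arithmetic. The helper names `null_vector` and `matvec` are made up for this illustration, and the sketch assumes a square matrix given as a list of rows with integer or rational entries.

```python
from fractions import Fraction


def null_vector(A):
    """Return a non-zero x with A x = 0, or None if A is nonsingular.

    A is a square matrix given as a list of rows (hypothetical helper).
    """
    n = len(A)
    R = [[Fraction(v) for v in row] for row in A]  # exact working copy
    pivots = []  # pivots[i] is the pivot column of row i
    row = 0
    for col in range(n):
        # Look for a row at or below `row` with a non-zero entry in this column.
        p = next((r for r in range(row, n) if R[r][col] != 0), None)
        if p is None:
            continue  # no pivot here: this column is a free column
        R[row], R[p] = R[p], R[row]
        pivot_value = R[row][col]
        R[row] = [v / pivot_value for v in R[row]]
        # Clear the column in every other row (reduced row echelon form).
        for r in range(n):
            if r != row and R[r][col] != 0:
                factor = R[r][col]
                R[r] = [a - factor * b for a, b in zip(R[r], R[row])]
        pivots.append(col)
        row += 1

    free_cols = [c for c in range(n) if c not in pivots]
    if not free_cols:
        return None  # n pivots: the columns are linearly independent

    # Set one free coefficient to 1 and solve for the pivot coefficients.
    free = free_cols[0]
    x = [Fraction(0)] * n
    x[free] = Fraction(1)
    for i, c in enumerate(pivots):
        x[c] = -R[i][free]
    return x


def matvec(A, x):
    """Plain matrix-vector product, used to check the result."""
    return [sum(Fraction(a) * b for a, b in zip(row, x)) for row in A]


A = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]  # columns satisfy u1 - 2*u2 + u3 = 0
x = null_vector(A)
print(x, matvec(A, x))
```

For this particular $A$ the sketch finds $\alpha = (1, -2, 1)$, i.e. $\vec{u_1} - 2\vec{u_2} + \vec{u_3} = \vec{0}$, and for an invertible matrix such as the identity it returns `None`.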

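$\endgroup$ 3 $\begingroup$$A$ singular $\iff \det(A)=0$. The characteristic polynomial of $A$ is $\det(A-\lambda I)$, and its value at $\lambda = 0$ is $\det(A)$, so $\det(A)=0$ if and only if $0$ is an eigenvalue of $A$. By the definition of an eigenvalue, there is then a non-zero $x$ with $Ax = 0\cdot x = 0$, i.e. $\ker(A)\neq\{0\}$.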
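As a quick sanity check of the determinant criterion, the following sketch computes $\det(A)$ by Gaussian elimination with exact fractions. The function name `det` is an assumption of this example (not a library call), and the input is assumed to be a square list of rows.

```python
from fractions import Fraction


def det(A):
    """Determinant of a square matrix via Gaussian elimination (illustrative helper)."""
    n = len(A)
    M = [[Fraction(v) for v in row] for row in A]  # exact working copy
    sign = 1
    result = Fraction(1)
    for col in range(n):
        # Find a non-zero pivot at or below the diagonal.
        p = next((r for r in range(col, n) if M[r][col] != 0), None)
        if p is None:
            return Fraction(0)  # no pivot in this column: the matrix is singular
        if p != col:
            M[col], M[p] = M[p], M[col]
            sign = -sign  # a row swap flips the sign of the determinant
        pivot_value = M[col][col]
        result *= pivot_value
        # Eliminate the entries below the pivot.
        for r in range(col + 1, n):
            factor = M[r][col] / pivot_value
            M[r] = [a - factor * b for a, b in zip(M[r], M[col])]
    return sign * result


singular = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
x = [1, -2, 1]  # a non-zero vector in the kernel of `singular`
print(det(singular))
print([sum(a * b for a, b in zip(row, x)) for row in singular])
```

Here the determinant comes out as zero and the non-zero vector $x = (1, -2, 1)^{T}$ satisfies $Ax = 0$, so $x$ is an eigenvector for the eigenvalue $0$.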

$\endgroup$