Derivation of the multivariate chain rule

I can't believe I couldn't find this online, but could someone give me a good proof of the multivariate chain rule? \begin{align} \frac{df}{dt} = \frac{\partial f}{\partial x}\frac{dx}{dt} + \frac{\partial f}{\partial y}\frac{dy}{dt} \end{align}

I found multiple derivations of this result online using differentials and the mean value theorem, but they don't look rigorous to me. Somehow, dividing the differential by $dt$ doesn't make it rigorous from my point of view...

This question comes from a more general context: I am trying to understand why differentiating a composition is effectively a matrix product. By understanding this formula, I would be able to see why building a matrix of derivatives is a good tool for computing derivatives by matrix multiplication.

Thanks!

2 Answers

Presumably we are saying that $f$ is a function of $x$ and $y$ (i.e., $f(x, y)$), which are both functions of $t$ ($x(t)$ and $y(t)$). So what does it mean to write $df/dt$? This is really the derivative of another function $F$ defined by

$$F(t) = f(x(t), y(t)).$$

Define the function $g\colon \mathbf{R} \to \mathbf{R}^2$ by $g(t) = (x(t), y(t))$ so that $F(t) = f(g(t)) = (f \circ g)(t)$.

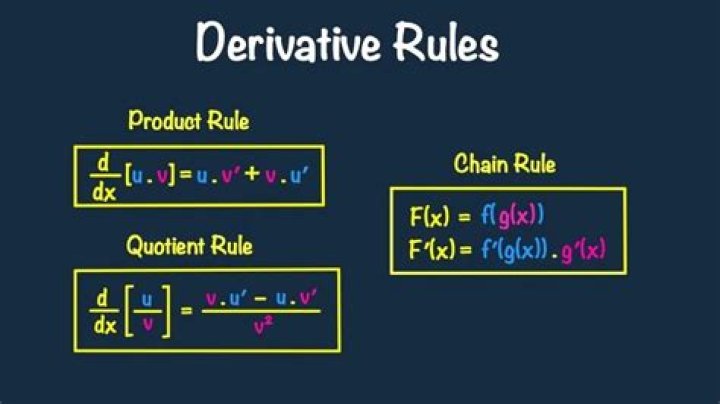

Recall the multivariable chain rule.

Theorem (Multivariable Chain Rule). Suppose $g\colon \mathbf{R}^n \to \mathbf{R}^m$ is differentiable at $a \in \mathbf{R}^n$ and $f\colon \mathbf{R}^m \to \mathbf{R}^p$ is differentiable at $g(a) \in \mathbf{R}^m$. Then $f \circ g\colon \mathbf{R}^n \to \mathbf{R}^p$ is differentiable at $a$, and its derivative at this point is given by $$D_a(f \circ g) = D_{g(a)}(f) \ D_a(g).$$

You can find a proof of this in, e.g., Calculus on Manifolds (Spivak). Back to the problem at hand: how do we use the chain rule to prove that

$$\frac{df}{dt} = \frac{\partial f}{\partial x}\frac{dx}{dt} + \frac{\partial f}{\partial y}\frac{dy}{dt}?$$

Well, let's try writing this in terms of a "matrix" product,

$$\frac{df}{dt} = \begin{bmatrix}\dfrac{\partial f}{\partial x} & \dfrac{\partial f}{\partial y}\end{bmatrix}\begin{pmatrix}dx/dt\\dy/dt\end{pmatrix}.$$

But this is exactly what the chain rule states when applied to the function $F = f \circ g$, with $n = 1$, $m = 2$ and $p = 1$. We have that

- $D_a(f \circ g) = D_a(F) = \dfrac{dF}{dt}$ (evaluated at some point $a$)

- $D_{g(a)}(f) = \begin{bmatrix}\dfrac{\partial f}{\partial x} & \dfrac{\partial f}{\partial y}\end{bmatrix}$ (each term evaluated at $g(a)$)

- $D_a(g) = \displaystyle \begin{pmatrix}dx/dt\\dy/dt\end{pmatrix}$ (each term evaluated at $a$)

where we have assumed differentiability of the maps.
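As a sanity check (not a proof), the identity above can be tested numerically. The sketch below assumes a hypothetical example, $f(x, y) = x^2 y$ with $g(t) = (\cos t, \sin t)$, and approximates every derivative by central differences. None of these choices come from the question, and the helper names are made up for illustration.

```python
import math

def f(x, y):
    # Hypothetical example function f(x, y) = x^2 * y (an assumption).
    return x * x * y

def g(t):
    # Hypothetical curve g(t) = (x(t), y(t)) = (cos t, sin t) (an assumption).
    return (math.cos(t), math.sin(t))

def F(t):
    # The composition F = f o g, i.e. F(t) = f(x(t), y(t)).
    x, y = g(t)
    return f(x, y)

def central_diff(func, s, h=1e-6):
    # Central-difference approximation of the derivative of func at s.
    return (func(s + h) - func(s - h)) / (2 * h)

def chain_rule_rhs(t):
    x, y = g(t)
    # Row matrix D_{g(t)} f = [df/dx, df/dy], evaluated at g(t).
    df_dx = central_diff(lambda u: f(u, y), x)
    df_dy = central_diff(lambda v: f(x, v), y)
    # Column matrix D_t g = (dx/dt, dy/dt), evaluated at t.
    dx_dt = central_diff(lambda s: g(s)[0], t)
    dy_dt = central_diff(lambda s: g(s)[1], t)
    # 1x2 times 2x1 matrix product.
    return df_dx * dx_dt + df_dy * dy_dt

t0 = 0.7
lhs = central_diff(F, t0)   # dF/dt computed directly
rhs = chain_rule_rhs(t0)    # Jacobian product from the chain rule
# Closed form for this particular example: F(t) = cos^2(t) sin(t).
exact = -2 * math.cos(t0) * math.sin(t0) ** 2 + math.cos(t0) ** 3
print(lhs, rhs, exact)
```

The three printed values should agree up to finite-difference error, which illustrates that the $1 \times 2$ by $2 \times 1$ product reproduces $dF/dt$ for this example.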

I also found most of the answers on the net circular: they assume that multivariable derivatives combine as a product of two vectors without proving it explicitly.

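So I went back to basics and used the definition of the derivative, which is the limit of $(f(x+e) - f(x))/e$ as $e$ tends to zero. This means $f(x+e) \approx f(x) + e\,\frac{df}{dx}$.

Applied to $f(x, y)$, this gives $f(x+e_1, y+e_2) \approx f(x, y+e_2) + e_1\,\frac{\partial f}{\partial x} \approx f(x, y) + e_1\,\frac{\partial f}{\partial x} + e_2\,\frac{\partial f}{\partial y}$ (1). (In the first step $\partial f/\partial x$ is really taken at $(x, y+e_2)$; I treat it as its value at $(x, y)$, which is fine to first order if the partial derivative is continuous.)

Also $x = x(t)$ and $y = y(t)$, so $x(t+e) \approx x(t) + e\,\frac{dx}{dt}$ and $y(t+e) \approx y(t) + e\,\frac{dy}{dt}$ (2)

(By the way, here you can define a vector $z = [x, y]$; since $z$, $x$ and $y$ are functions of $t$, by convention $z(t+e) = [x(t+e), y(t+e)] \approx z(t) + e\,[dx/dt, dy/dt]$. This is where the arrays start to appear.)

Finally, writing $f(t)$ for $f(x(t), y(t))$, we have $f(t+e) = f(x(t+e), y(t+e))$, and by (2) $f(t+e) \approx f\left(x(t) + e\,\frac{dx}{dt},\ y(t) + e\,\frac{dy}{dt}\right)$. Then by (1), with $e_1 = e\,\frac{dx}{dt}$ and $e_2 = e\,\frac{dy}{dt}$, $f(t+e) \approx f(x(t), y(t)) + e\,\frac{dx}{dt}\frac{\partial f}{\partial x} + e\,\frac{dy}{dt}\frac{\partial f}{\partial y} = f(x, y) + e\left(\frac{dx}{dt}\frac{\partial f}{\partial x} + \frac{dy}{dt}\frac{\partial f}{\partial y}\right)$.

But also $f(t+e) \approx f(t) + e\,\frac{df}{dt}$.

So when $e$ tends to zero, we get $\frac{df}{dt} = \frac{\partial f}{\partial x}\frac{dx}{dt} + \frac{\partial f}{\partial y}\frac{dy}{dt}$.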

Not a very rigorous proof, though.
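One possible way to tighten the sketch above is to keep track of the error term explicitly. The following assumes that $f$ is differentiable at $(x(t), y(t))$ in the multivariable sense (existence of the partial derivatives alone is not enough) and that $x$ and $y$ are differentiable at $t$. The names $F$, $h$, $k$ and $r$ are introduced here for illustration, with $F(t) = f(x(t), y(t))$.

Differentiability of $f$ at $(x(t), y(t))$ means

$$f(x(t)+h,\ y(t)+k) = f(x(t), y(t)) + \frac{\partial f}{\partial x}\,h + \frac{\partial f}{\partial y}\,k + r(h, k), \qquad \frac{r(h, k)}{\sqrt{h^2 + k^2}} \to 0 \ \text{ as } (h, k) \to (0, 0).$$

Taking $h = x(t+e) - x(t)$ and $k = y(t+e) - y(t)$ and dividing by $e \neq 0$ gives

$$\frac{F(t+e) - F(t)}{e} = \frac{\partial f}{\partial x}\,\frac{h}{e} + \frac{\partial f}{\partial y}\,\frac{k}{e} + \frac{r(h, k)}{e}.$$

As $e \to 0$, $h/e \to dx/dt$ and $k/e \to dy/dt$. For the last term, $r(h, k) = 0$ when $(h, k) = (0, 0)$, and otherwise

$$\left|\frac{r(h, k)}{e}\right| = \frac{|r(h, k)|}{\sqrt{h^2 + k^2}} \cdot \frac{\sqrt{h^2 + k^2}}{|e|},$$

where the first factor tends to $0$ (because $(h, k) \to (0, 0)$ by continuity of $x$ and $y$) and the second stays bounded (it tends to $\sqrt{(dx/dt)^2 + (dy/dt)^2}$). So the remainder vanishes in the limit and

$$\frac{dF}{dt} = \frac{\partial f}{\partial x}\frac{dx}{dt} + \frac{\partial f}{\partial y}\frac{dy}{dt}.$$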
