Could not access KVM kernel module while building SONiC NOS on vm with vagrant

I'm trying to build the SONiC image for the virtual switch on an Ubuntu 16.04 (xenial) VM managed by Vagrant, but I get this error:

++ on_error

++ echo '============= kvm_log =============='

============= kvm_log ==============

++ cat /tmp/tmp.3CIfxaYzCK

Could not access KVM kernel module: No such file or directory

qemu-system-x86_64: failed to initialize KVM: No such file or directory

+ on_exit

+ rm -f /tmp/tmp.3CIfxaYzCK

[ FAIL LOG END ] [ target/sonic-vs.img.gz ]

make: *** [slave.mk:793: target/sonic-vs.img.gz] Error 1

Makefile.work:224: recipe for target 'all' failed

make[1]: *** [all] Error 2

make[1]: Leaving directory '/home/vagrant/sonic-buildimage'

Makefile:7: recipe for target 'all' failed

make: *** [all] Error 2

I've completed all the prerequisites listed in the build guide, and I'm using the 202006 release branch.

I did some research beforehand and suspected a problem with KVM. But when I run sudo modprobe kvm-intel on the VM, it says: modprobe: ERROR: could not insert 'kvm_intel': Operation not supported
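That modprobe error usually means the guest CPU does not expose hardware virtualization. A minimal sketch of a check for this, assuming a Linux guest; the function name has_virt_flags is made up for illustration:

```shell
#!/bin/sh
# Succeeds if the given cpuinfo file lists Intel VT-x (vmx) or AMD-V (svm).
# Defaults to /proc/cpuinfo; the optional argument exists only for testing.
has_virt_flags() {
    grep -Eqw 'vmx|svm' "${1:-/proc/cpuinfo}" 2>/dev/null
}

if has_virt_flags; then
    echo "vmx/svm flag found: hardware virtualization is visible here"
else
    echo "no vmx/svm flag: the hypervisor is not passing it through"
fi

# /dev/kvm appears only after kvm_intel or kvm_amd has loaded successfully.
if [ -e /dev/kvm ]; then
    echo "/dev/kvm is present"
else
    echo "/dev/kvm is missing"
fi
```

If neither flag shows up inside the VM, loading kvm_intel cannot succeed no matter which packages are installed in the guest.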

Result from dmesg | grep kvm:

[ 0.000000] kvm-clock: Using msrs 4b564d01 and 4b564d00

[ 0.000000] kvm-clock: cpu 0, msr 1:96fef001, primary cpu clock

[ 0.000000] kvm-clock: using sched offset of 3780522329 cycles

[ 0.000000] clocksource: kvm-clock: mask: 0xffffffffffffffff max_cycles: 0x1cd42e4dffb, max_idle_ns: 881590591483 ns

[ 0.431418] kvm-clock: cpu 1, msr 1:96fef041, secondary cpu clock

[ 0.445819] kvm-clock: cpu 2, msr 1:96fef081, secondary cpu clock

[ 0.460479] kvm-clock: cpu 3, msr 1:96fef0c1, secondary cpu clock

[ 0.670955] clocksource: Switched to clocksource kvm-clock

[ 111.115672] kvm: no hardware support

[10515.351145] kvm: no hardware support

[11873.537344] kvm: no hardware support

Result from lsmod:

Module Size Used by

nft_meta 16384 11

nft_counter 16384 15

nft_chain_nat_ipv4 16384 4

nft_compat 20480 4

nf_tables_ipv6 16384 4

nf_tables_ipv4 16384 5

nf_tables 69632 37 nf_tables_ipv4,nf_tables_ipv6,nft_chain_nat_ipv4,nft_compat,nft_meta,nft_counter

veth 16384 0

xt_CHECKSUM 16384 1

iptable_mangle 16384 1

ipt_REJECT 16384 2

nf_reject_ipv4 16384 1 ipt_REJECT

xt_tcpudp 16384 6

ebtable_filter 16384 0

ebtables 32768 1 ebtable_filter

ip6table_filter 16384 0

ip6_tables 28672 1 ip6table_filter

kvm 561152 0

irqbypass 16384 1 kvm

xt_conntrack 16384 3

ipt_MASQUERADE 16384 5

nf_nat_masquerade_ipv4 16384 1 ipt_MASQUERADE

nf_conntrack_netlink 40960 0

nfnetlink 16384 4 nf_tables,nft_compat,nf_conntrack_netlink

xfrm_user 32768 1

xfrm_algo 16384 1 xfrm_user

xt_addrtype 16384 4

iptable_filter 16384 1

iptable_nat 16384 1

nf_conntrack_ipv4 20480 4

nf_defrag_ipv4 16384 1 nf_conntrack_ipv4

nf_nat_ipv4 16384 2 nft_chain_nat_ipv4,iptable_nat

nf_nat 28672 2 nf_nat_ipv4,nf_nat_masquerade_ipv4

nf_conntrack 106496 6 nf_nat,nf_nat_ipv4,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_ipv4

ip_tables 24576 3 iptable_filter,iptable_mangle,iptable_nat

x_tables 36864 13 ip6table_filter,xt_CHECKSUM,ip_tables,xt_tcpudp,ipt_MASQUERADE,nft_compat,xt_conntrack,iptable_filter,ebtables,ipt_REJECT,iptable_mangle,ip6_tables,xt_addrtype

br_netfilter 24576 0

bridge 122880 1 br_netfilter

stp 16384 1 bridge

llc 16384 2 stp,bridge

aufs 217088 0

vboxsf 49152 1

overlay 49152 0

isofs 40960 0

input_leds 16384 0

serio_raw 16384 0

vboxguest 286720 2 vboxsf

video 40960 0

binfmt_misc 20480 1

ib_iser 49152 0

rdma_cm 49152 1 ib_iser

iw_cm 45056 1 rdma_cm

ib_cm 49152 1 rdma_cm

ib_sa 36864 2 rdma_cm,ib_cm

ib_mad 49152 2 ib_cm,ib_sa

ib_core 106496 6 rdma_cm,ib_cm,ib_sa,iw_cm,ib_mad,ib_iser

ib_addr 20480 2 rdma_cm,ib_core

iscsi_tcp 20480 0

libiscsi_tcp 24576 1 iscsi_tcp

libiscsi 53248 3 libiscsi_tcp,iscsi_tcp,ib_iser

scsi_transport_iscsi 102400 4 iscsi_tcp,ib_iser,libiscsi

autofs4 40960 2

btrfs 995328 0

raid10 49152 0

raid456 106496 0

async_raid6_recov 20480 1 raid456

async_memcpy 16384 2 raid456,async_raid6_recov

async_pq 16384 2 raid456,async_raid6_recov

async_xor 16384 3 async_pq,raid456,async_raid6_recov

async_tx 16384 5 async_pq,raid456,async_xor,async_memcpy,async_raid6_recov

xor 24576 2 btrfs,async_xor

raid6_pq 102400 4 async_pq,raid456,btrfs,async_raid6_recov

libcrc32c 16384 1 raid456

raid1 40960 0

raid0 20480 0

multipath 16384 0

linear 16384 0

crct10dif_pclmul 16384 0

crc32_pclmul 16384 0

ghash_clmulni_intel 16384 0

aesni_intel 167936 0

aes_x86_64 20480 1 aesni_intel

lrw 16384 1 aesni_intel

gf128mul 16384 1 lrw

glue_helper 16384 1 aesni_intel

ablk_helper 16384 1 aesni_intel

cryptd 20480 3 ghash_clmulni_intel,aesni_intel,ablk_helper

mptspi 24576 1

scsi_transport_spi 32768 1 mptspi

mptscsih 40960 1 mptspi

psmouse 131072 0

mptbase 102400 2 mptspi,mptscsih

e1000 135168 0

Also, I am using VirtualBox version 6.0.22r137980.

1 Answer

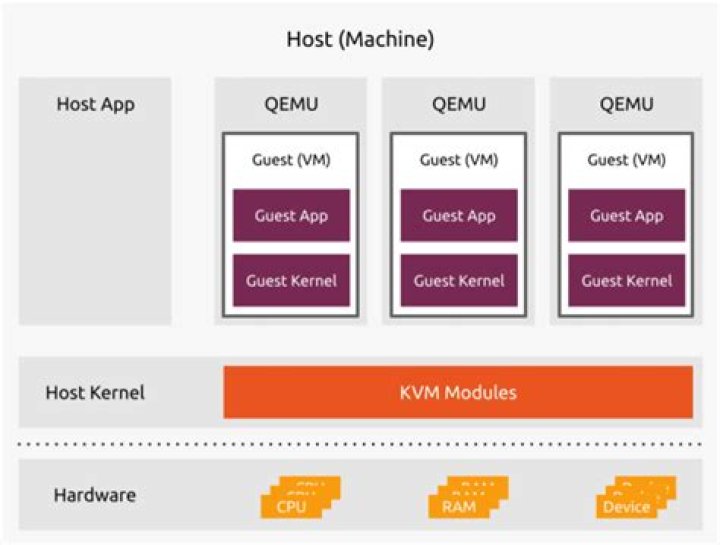

You need to enable nested virtualization. For a long time this wasn't possible at all with VirtualBox, which is Vagrant's default provider.
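As a rough sketch of what enabling it can look like with VirtualBox, assuming version 6.0 or later on the host: the VM name sonic-build-vm and the DRY_RUN switch are placeholders for illustration, not something from the question. By default the script only prints the command.

```shell
#!/bin/sh
# Run on the host, not inside the guest, with the VM powered off
# (for example after `vagrant halt`).

# Hypothetical VM name; list the real ones with `VBoxManage list vms`.
VM_NAME="${VM_NAME:-sonic-build-vm}"

# Safe default: print the command instead of running it.
DRY_RUN="${DRY_RUN:-1}"

enable_nested_virt() {
    # --nested-hw-virt is a VBoxManage modifyvm option in VirtualBox 6.0+.
    if [ "$DRY_RUN" = "1" ]; then
        echo "VBoxManage modifyvm $1 --nested-hw-virt on"
    else
        VBoxManage modifyvm "$1" --nested-hw-virt on
    fi
}

enable_nested_virt "$VM_NAME"
```

Set DRY_RUN=0 to actually apply it. With Vagrant, the same modifyvm option can also be passed through the VirtualBox provider's customize setting in the Vagrantfile. As far as I know, VirtualBox 6.0 supports nesting only on AMD CPUs and Intel support arrived in 6.1, so on an Intel host an upgrade may be needed; check the VirtualBox changelog to confirm.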

Once you enable nesting, the issue you reported should be resolved. You'll find plenty of how-tos on the internet; here are a few to get you started: