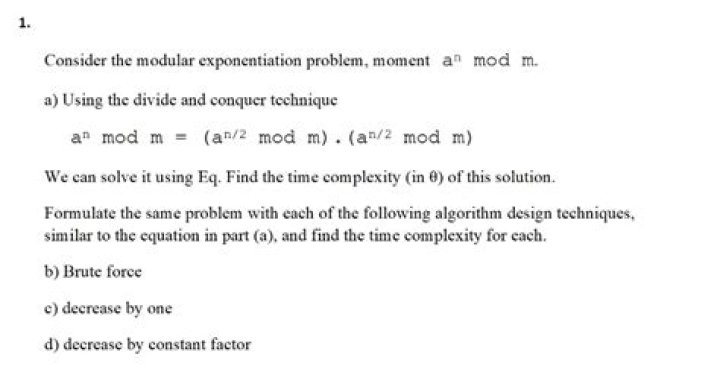

Computational Complexity of Modular Exponentiation (from Rosen's Discrete Mathematics)

This is a block of code from Kenneth Rosen's Discrete Mathematics book, for calculating $b^n \mod m$, and it says that:

The number of bit operations should be big-O of$\mathcal{O} \left ( \left (\log(m) \right )^2 \cdot \log(n) \right)$.

I understand that, there are $k$ (which is the length in bits of the binary form of $n$) runs of the loop, so that there is a $\log(n)$ term, but I don't see where the $\left (\log(m) \right)^2$ term is coming from.

It seems like they are saying the two lines of the for loop, each perform a modular division with an integer $m$, whose length is $\log(m)$, but I am unsure if $(x \cdot power)$ and $(power \cdot power)$ should be considered the integer $m$. Or why they would be multiplied instead of just $2\log(m)$?

Thanks for the help!

$\endgroup$1 Answer

$\begingroup$For $a\times b \equiv \bmod m$ they use a quadratic multiplication / reduction algorithm with a complexity of $O(\log(m)^2)$. Multiply this with the number of loops, i.e. $k=\log(n),$ and you get $O(\log(m)^2 \times \log(n)).$

Note that the square power*poweris computed $k$ times, but x*power only $k/2$ on average (depending on the bit count of $a$).