Are log files written to when a buffer fills or in 'realtime'?

I have a script running which pipes its output through another script; that wrapper script adds a timestamp to each line of the log. However the data is being written to the log file in chunks of about 8000 characters (roughly 180 lines). This is causing all of those lines to be timestamped exactly the same. If the underlying script that is outputting info is run directly in the shell then the outputs appear line by line in real time.

For reference the script looks like this:

#!/bin/bash

python foo.py | ~/timestamp.sh >> ~/logs/foo.log

exitThe timestamp script just adds a, you guessed it, timestamp to every line of output. That script contains the following:

#!/bin/bash

while read x; do echo -n `date +%d/%m/%Y\ %H:%M:%S`; echo -n " "; echo $x;

doneIf I remove the timestamp.sh from the equation the behaviour is exactly the same. I checked this by running the script and monitoring the log file using tail -f script.log

Is there a setting that buffers file output before writing it to log files? Are there other methods I can try to timestamp each line of a log file? I have had this type of thing work before on previous systems but no matter what I try nothing seems to be sorting this.

This is all on Ubuntu 14.04.4 x64

22 Answers

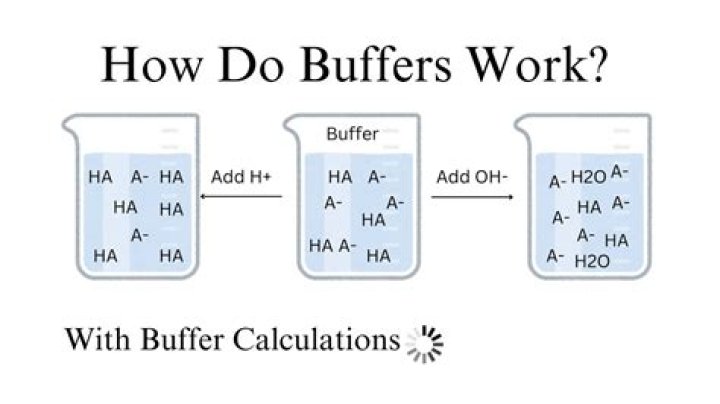

The root cause is your process, here python, is using libc stdout where the output is line buffered when output to a terminal but block buffered when output to something else, like a pipe here.

You can fix the issue either in the python code by explicitly flushing the buffer after each log output:

sys.stdout.flush()or by controlling how buffering will be done with some hack:

stdbuf -oL python foo.py | ~/timestamp.sh >> ~/logs/foo.logor

unbuffer python foo.py | ~/timestamp.sh >> ~/logs/foo.logI would recommend explicitly flushing the buffer in your code (sys.stdout.flush()), as you are the only one to know precisely where the output should be flushed. Line buffering (stdbuf -oL) is slightly less optimized but should be ok given the fact you timestamp per line, and disabling all buffering (unbuffer or heemayl's python -u suggestion) is the worst approach, as far as performance is concerned (although it might be unnoticeable depending on how your python code is writing its output).

As the STDOUT stream is block buffered by default (when not going to terminal) by python, you need to make the stream unbuffered (or line buffered).

python provides a way to make the streams unbuffered, here is the Pythonic way:

python -u foo.pySo, your whole command line becomes:

python -u foo.py | ~/timestamp.sh >> ~/logs/foo.logFrom man python:

-u Force stdin, stdout and stderr to be totally unbufferedI am now aware of your script, but within the script you can flush a certain stream using sys module:

sys.stdout.flush()